On Tuesday, Anthropic announced Claude Mythos Preview and Project Glasswing, and if you work in cybersecurity, you should be paying close attention. Not with the panic of the headlines ("too dangerous to release," "could bring down a Fortune 100 company"), but with the sober recognition that the ground just shifted under us all.

And the shift favors defenders, if we're smart about it.

Let me start with what actually happened, because the real story is getting buried beneath a mountain of hype.

Anthropic built a general-purpose AI model that, as a side effect of being extraordinarily good at understanding code, turned out to be extraordinarily good at finding vulnerabilities in that code. Not surface-level vulnerabilities; not the kind of things a junior analyst flags in a SAST scan. We're talking about a 27-year-old denial-of-service bug in OpenBSD, an operating system whose entire identity is built around security. A 16-year-old flaw in FFmpeg's H.264 codec that survived over five million fuzzing passes without detection. A 17-year-old remote code execution vulnerability in FreeBSD's NFS server that grants unauthenticated root access to anyone on the network.

These aren't edge cases. The affected projects are foundational pieces of infrastructure that billions of people depend on every day, and the bugs that lived inside them were invisible to every human reviewer and every automated tool that existed until, well, this week.

Rather than release Mythos to the public, Anthropic did something commendable: they restricted access and assembled a coalition. Project Glasswing brings together AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks as launch partners, with more than 40 additional organizations getting access. Anthropic is committing up to $100 million in usage credits and $4 million in direct donations to open-source security organizations, along with a straightforward mandate: use Mythos to find and fix vulnerabilities in the software the world runs on before models with similar capabilities become broadly available.

That window, by Anthropic's own estimate, is somewhere between six and eighteen months. The clock is ticking.

The bigger opportunity: Building better software

The vulnerabilities Mythos is uncovering aren't exotic and they aren't some radically new class of AI-discovered weakness. They're the same categories of flaws we've seen for decades — memory corruption bugs, integer overflows, authentication bypasses, race conditions. The 27-year-old OpenBSD bug is a perfect illustration: it's not that the bug itself was too difficult to find, it's that nobody had the right combination of scale, patience, and code comprehension to look in an enormous number of wrong places until they reached the right place.

But here's what excites me most about this moment. Finding yesterday's bugs is valuable, but what’s transformative is the possibility that AI like Mythos can help us stop creating them in the first place.

Think about what it means when a model deeply understands not just that a vulnerability exists, but why it exists — the patterns of logic, the assumptions baked into a code path, the subtle interactions between components that create exploitable conditions. That same depth of understanding can be applied upstream and embedded in the development process itself, catching dangerous patterns as code is being written, flagging architectural decisions that introduce risk, helping developers build with security as a default rather than an afterthought.

For decades the cybersecurity industry has been playing an expensive game of cleanup. We bolt on defenses downstream to compensate for insecure software built upstream. Every CISO lives this every day. What Mythos signals is a future where AI helps move security to the point of creation, where the same capabilities that can find a 27-year-old vulnerability can also prevent the next one from being introduced in the first place. Not overnight and not without human judgment, but the trajectory is clear, and it's the most promising shift I've seen in my career.

Why this matters for defenders today

For those of us who’ve spent our careers building security programs, the immediate reaction to Mythos might be dread. Great, another escalation in an arms race we're already losing.

I'll push back on that framing.

The defender's fundamental problem has always been asymmetry. Attackers need to find one way in, but defenders need to protect everything. And the challenge was never just finding the bugs but also remediating them. The hard part is understanding root cause, developing a fix, testing it, ensuring it doesn't break functionality, and deploying it across complex, interdependent systems.

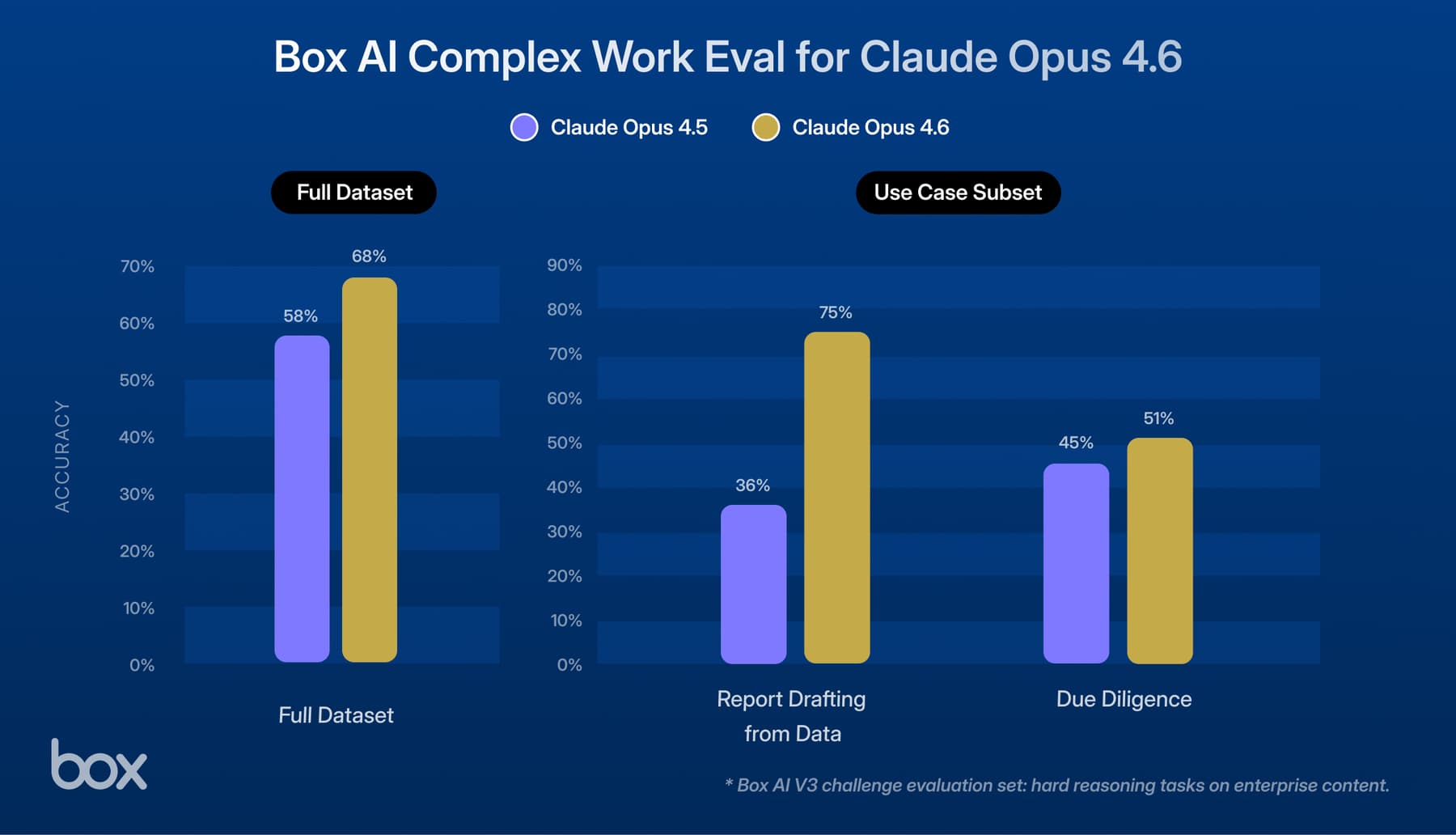

Mythos changes both sides of that equation. For the first time, defenders have access to a tool that can reason about code the way an elite security researcher does, but at machine scale and machine speed. Anthropic reports that engineers with no formal security training asked Mythos to find remote code execution vulnerabilities overnight and woke up to complete working exploits. The model doesn't just find bugs — it chains them. In one documented case, it linked four separate vulnerabilities into a browser exploit that escaped both renderer and OS sandboxes. Where its predecessor, Claude Opus 4.6, had a near-0% success rate at autonomous exploit development, Mythos achieved 72%.

But the real promise extends beyond discovery. AI of this caliber has the potential to assist with root cause analysis, generate candidate patches, prioritize risk, and accelerate testing and deployment. That doesn't make remediation automatic — fixing software at scale still requires engineers, operators, security teams, and business leaders to validate changes, manage risk, and preserve functionality. What it does is make that work faster, cheaper, and more manageable. The human stays in the loop, but the loop gets a lot tighter.

The tension I’m still wrestling with

I'll be honest: my first reaction to the multi-step exploit chains Mythos is building was skepticism. In the real world, the vulnerabilities that keep me up at night are rarely that sophisticated. They're misconfigured cloud storage, unpatched known CVEs, compromised credentials. The stuff that's boring and preventable and gets exploited every single day.

So when I see a demonstration of a four-vulnerability browser exploit chain, my CISO brain says, "Impressive, but how does this rank against the service account with admin credentials that's been sitting in our environment for months?"

What changed my thinking was realizing that the complexity of an exploit chain matters less when the entity constructing it is a machine that works around the clock. Anthropic's own assessment is that defense-in-depth measures whose security value comes primarily from friction rather than hard barriers become considerably weaker against model-assisted adversaries. The friction that protected us (the fact that chaining four vulnerabilities together took weeks of elite human effort) is precisely the kind of obstacle that AI can now eliminate. If Mythos can build these chains today, whatever model a nation-state adversary deploys six months from now will be able to as well.

And some of these findings aren't obscure at all in their impact. A remote crash affecting every OpenBSD server on the internet and a certificate authentication bypass in a major cryptography library are exactly the types of vulnerabilities that nation-state actors stockpile. The fact that the attack path evaded decades of expert review doesn't diminish the impact; it makes the discovery more valuable, not less.

I still believe that for most organizations, the boring fundamentals remain the highest-leverage security investment. But the ceiling of what counts as "realistic threat" just moved, and our threat models need to move with it.

What CISOs should be doing right now

Like every CISO reading this announcement, I'm already thinking about what it means for our own environment. The patch volume coming out of Glasswing over the next six months is going to be significant, and the organizations that understand ahead of time which of their dependencies are exposed will be in the best position to act. Here's what I'd encourage every CISO to be thinking about.

- Revisit your threat models for AI-assisted exploitation. Multi-step exploit chaining is no longer a theoretical risk reserved for nation-state threat actors. If your risk assessments still treat complex attack paths as "low likelihood," those assessments are stale. Update them.

- Get ahead of the Glasswing patch wave. Anthropic will publish a report within 90 days. Expect months of high-volume patch releases across operating systems, browsers, and major libraries afterward. If your patching cadence is slow, this is the forcing function to fix it. Identify your most critical dependencies now so you're ready to act when disclosures start.

- Reach out to your vendors. If your key technology providers are Glasswing partners, ask them what they're finding and how it affects what you run. If they're not in the coalition, ask them what their plan is. This is a reasonable question for every CISO to put to their supply chain.

- Invest harder in the fundamentals. Secure-by-design principles, memory-safe languages, minimal attack surfaces, strong authentication, network segmentation — these become more important in an AI-augmented threat landscape, not less. The best defense against an AI that chains obscure vulnerabilities is not having those vulnerabilities.
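
For the patch-wave item above, a first pass at identifying your most critical dependencies can be as simple as a scored inventory. The sketch below is illustrative only: the `Dependency` fields, the scoring weights, and the sample entries are all hypothetical assumptions, not anything published by Anthropic or the Glasswing partners. Treat it as a starting point for a conversation with your engineering teams, not a risk model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    """One entry in a hypothetical dependency inventory."""
    name: str
    internet_facing: bool      # reachable from untrusted networks
    business_criticality: int  # 1 (low) to 5 (high); assumed scale
    typical_patch_days: int    # historical days from release to deployment


def priority(dep: Dependency) -> float:
    """Assumed scoring rule: higher means review patch readiness sooner."""
    exposure = 2.0 if dep.internet_facing else 1.0
    # Slow patching raises priority; cap at 90 days so one outlier
    # cannot dominate the ranking.
    lag = min(dep.typical_patch_days, 90) / 30
    return dep.business_criticality * exposure * (1 + lag)


def rank(deps: list[Dependency]) -> list[Dependency]:
    """Return dependencies ordered from highest to lowest priority."""
    return sorted(deps, key=priority, reverse=True)


# Made-up sample inventory for illustration.
inventory = [
    Dependency("edge-proxy", True, 5, 45),
    Dependency("internal-wiki", False, 2, 60),
    Dependency("media-transcoder", True, 3, 14),
]

for dep in rank(inventory):
    print(f"{dep.name}: {priority(dep):.1f}")
```

The specific weights matter less than having the inventory at all: a list like this, kept current, tells you where a slow patching cadence will hurt most once disclosures begin.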

The beginning, not the end

Anthropic's deliberate deployment approach — holding back capabilities, limiting access, focusing first on strengthening real-world systems collaboratively — is a rare sign of strategic seriousness in an industry that often moves fast and breaks things. It reflects an important recognition that how we deploy powerful AI matters as much as what it can do. And the Glasswing partner set, which includes systemically important institutions like JPMorgan Chase alongside the companies that build the operating systems and browsers the world runs on, shows an understanding that this is about strengthening the digital infrastructure that underpins the global economy, not just improving any single company's security posture.

The real significance of this moment isn't the vulnerabilities Mythos found but the future it points toward. One where AI doesn't just help us find and fix flaws faster, but helps us build software that has fewer flaws to begin with. Where security shifts from something we bolt on after the fact to something woven into the fabric of how software is created.

The capabilities demonstrated this week show we're closer than most people realize. And every CISO, every engineering leader, every organization that builds or depends on software should be asking themselves how they're going to be part of that shift.

AI found a bug that the entire industry missed for 27 years. That should make every CISO uncomfortable and optimistic in equal measure. What matters now is what we do with the head start.