The core problem we're solving

Traditional security architecture and design reviews are hitting a wall. They simply don't scale.

Modern systems are becoming more complex while security architecture teams remain relatively small. Distributed services blur trust boundaries, third-party integrations multiply, and threats evolve faster than documentation can keep up. Meanwhile, product development is accelerating. Feature velocity increases while security review capacity stays largely constant.

The result is predictable: architecture reviews become a bottleneck.

The traditional playbook relies on outdated approaches:

- Human-led STRIDE assessments that take weeks to complete

- Static review checklists that miss novel attack vectors

- Security sign-offs that arrive when code is already in production

- Expertise bottlenecked in a handful of overworked architects

Over time, two insights have fundamentally reshaped how we think about security architecture.

First, review volume doesn’t equal risk reduction.

Performing more reviews doesn't make systems more secure; it often just produces more paperwork. The real challenge is ensuring meaningful coverage across designs, prioritizing exploitable risks, producing developer-ready guidance, and systematically eliminating recurring design weaknesses.

Second, the consumers of security guidance are no longer only human.

The development lifecycle is being revolutionized by AI agents. Coding agents generate entire codebases. Review agents evaluate pull requests at superhuman speed. Testing agents probe systems and generate test cases at unprecedented scale. Security architecture output designed only for human consumption is a bottleneck in an agentic development workflow — the very problem we set out to solve.

If we don't fundamentally change how we approach security architecture and design reviews, they'll continue to scale linearly with headcount while engineering and product development accelerate at machine speed. That's a recipe for irrelevance.

Our vision: Security architecture at product velocity

Security architecture can’t be a downstream checkpoint. When meaningful risk is identified at design time, security guidance must arrive before code is written, not after implementation is already underway.

Like many security teams, we've spent years shifting architecture reviews earlier in the lifecycle through proactive engagement, clearer requirements, and engineering-ready guidance. That work remains essential, but the emergence of agentic development demands a more fundamental transformation. We're no longer building security architecture outputs for humans. We're building for agents.

Humans can read threat models, internalize the context, and apply judgment to ambiguous situations. An agent requires structured output that can be consumed directly by tools and automation across the entire development pipeline. Security architecture must evolve from human-readable reports to machine-consumable security intelligence.

Security architecture begins to move from a human-centric review process to a scalable, machine-consumable capability:

- Late-stage review → Early design engagement

- Security sign-off → Security requirements as structured input

- Advisory feedback → Agent-consumable, engineering-ready guidance

- Bottlenecked expertise → Scalable, agent-driven capability

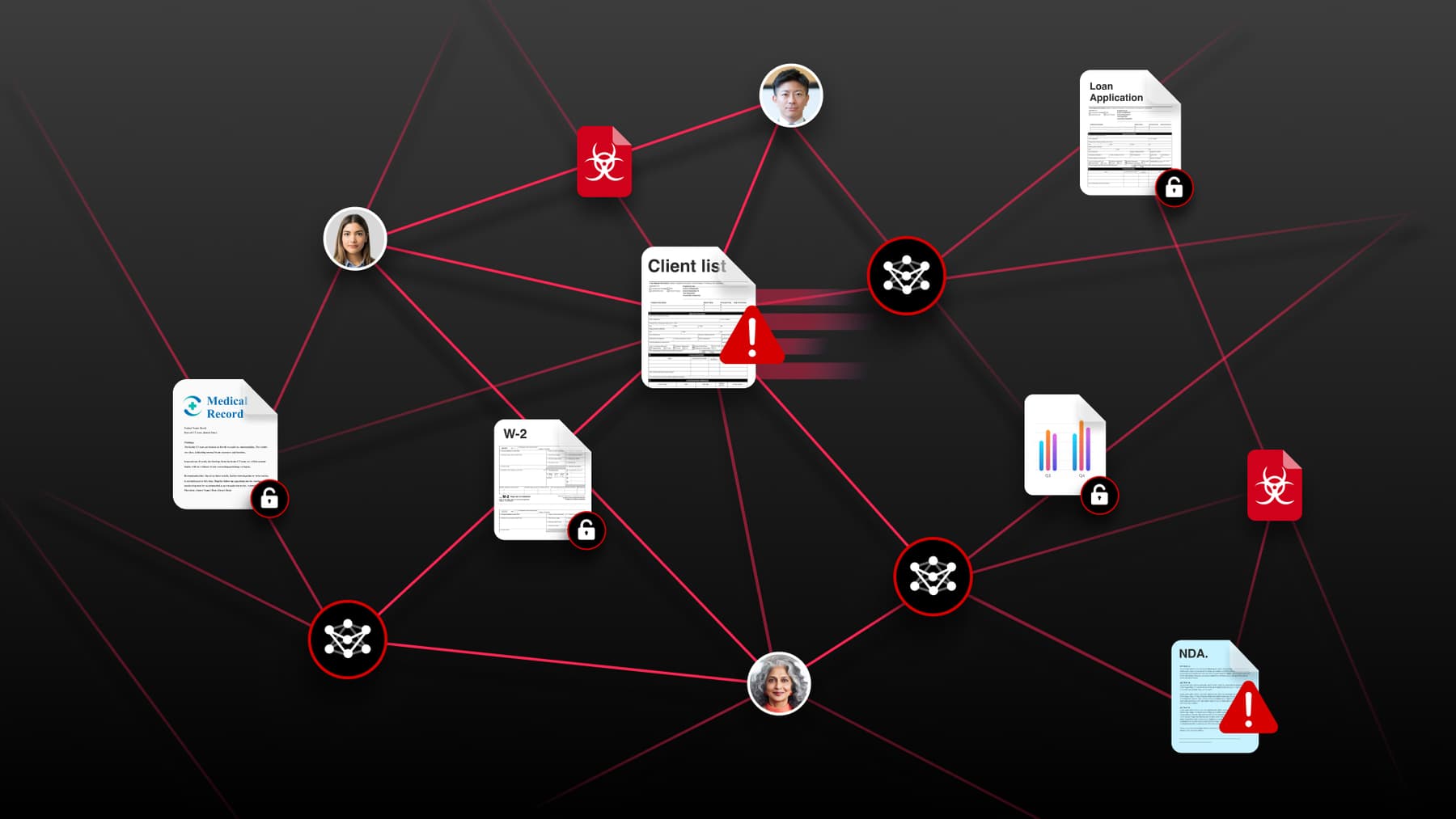

As software becomes increasingly agentic, another shift emerges: agents interacting with other agents.

These autonomous systems retrieve sensitive data, invoke tools, trigger workflows, and operate with delegated credentials across service boundaries. Security architecture must therefore evolve beyond analyzing static software systems to governing how autonomous systems interact.

This leads to a model where agents help secure other agents, under human-defined policy and oversight. AI systems analyze designs, enforce architectural constraints, and monitor how agents operate within defined trust boundaries. Security engineers define the policies, set the guardrails, and intervene when systems encounter novel or ambiguous risks.

The goal isn't autonomous security architecture; it's architecture guidance that both humans and agents can understand, enforce, and act on as systems evolve.

How we're approaching it

Turning this vision into reality requires rethinking how security architecture is produced, consumed, and applied across the development lifecycle. Here’s how we’re approaching it.

Automated STRIDE threat modeling

We've built a security design review agent that performs automated STRIDE threat modeling against design documentation. This agent analyzes design artifacts and generates:

- Feature summaries that capture essential functionality

- Architecture overviews mapping system components

- Trust boundary identification highlighting security perimeters

- Data flow analysis tracking sensitive information movement

- Sensitive asset mapping cataloging critical resources

- High-severity threat identification prioritizing real risks

- Concrete remediation guidance providing actionable fixes
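This post doesn't describe how the agent derives threats internally. As a hedged illustration of one common starting point, the sketch below applies the classic STRIDE-per-element mapping to the elements of a data flow diagram. It enumerates candidate threats for elements that cross a trust boundary. The element names, the `Element` and `CandidateThreat` types, and the boundary-only filter are assumptions made for illustration. Each candidate would still need to be assessed against the actual design before it becomes a finding.

```python
from dataclasses import dataclass
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information disclosure"
    DENIAL_OF_SERVICE = "Denial of service"
    ELEVATION_OF_PRIVILEGE = "Elevation of privilege"


# STRIDE-per-element: threat categories that typically apply to each DFD element type.
# Repudiation on data stores mainly matters for audit logs, so it is omitted here.
APPLICABLE = {
    "external_entity": (Stride.SPOOFING, Stride.REPUDIATION),
    "process": tuple(Stride),
    "data_store": (Stride.TAMPERING, Stride.INFORMATION_DISCLOSURE, Stride.DENIAL_OF_SERVICE),
    "data_flow": (Stride.TAMPERING, Stride.INFORMATION_DISCLOSURE, Stride.DENIAL_OF_SERVICE),
}


@dataclass(frozen=True)
class Element:  # hypothetical representation of one DFD element
    name: str
    kind: str  # one of the APPLICABLE keys
    crosses_trust_boundary: bool = False


@dataclass(frozen=True)
class CandidateThreat:
    element: str
    category: Stride


def enumerate_candidates(elements, boundary_only=True):
    """List STRIDE candidates per element, by default only where a trust boundary is crossed."""
    candidates = []
    for el in elements:
        if boundary_only and not el.crosses_trust_boundary:
            continue
        for category in APPLICABLE[el.kind]:
            candidates.append(CandidateThreat(el.name, category))
    return candidates
```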

With AI assistance, what traditionally required days of manual, expert-driven analysis now happens in minutes.

Design artifact ingestion at scale

Security architecture has always struggled with artifact sprawl. Design information lives scattered across PRDs, data flow diagrams, spreadsheets, meeting notes, and chat threads. Our agent supports diverse file formats and scans specified directories to analyze all supported files — eliminating manual copying, consolidation, or reformatting.

Security no longer depends on perfectly packaged documentation; the agent analyzes what already exists.
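Here is a minimal sketch of the directory scan. It assumes the agent selects files by extension. This post doesn't list the supported formats, so `SUPPORTED_SUFFIXES` and `collect_design_artifacts` are hypothetical. Parsing each format into text is out of scope here.

```python
from pathlib import Path

# Hypothetical format list; the actual supported formats are not specified.
SUPPORTED_SUFFIXES = {".md", ".txt", ".pdf", ".docx", ".csv", ".xlsx", ".drawio", ".json"}


def collect_design_artifacts(root):
    """Recursively gather files under `root` whose extension (case-insensitive) is supported."""
    return sorted(
        p for p in Path(root).rglob("*")
        if p.is_file() and p.suffix.lower() in SUPPORTED_SUFFIXES
    )
```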

High signal, developer-ready analysis

The agent follows three principles:

- Focus on actionability: Surface findings developers and agents can implement immediately, avoiding abstract security theory

- Enforce conciseness: Skip lengthy explanations and produce high-density, clear output

- Prioritize rigorously: Emphasize critical and high-severity threats ranked by exploitability and impact
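One way to make "prioritize rigorously" concrete is a risk-matrix-style score. The sketch below assumes 1-to-5 scales for exploitability and impact, multiplies them, and keeps only critical and high findings. The scales, the thresholds, and the `Finding` type are illustrative assumptions, not the agent's actual scoring.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    exploitability: int  # hypothetical scale: 1 (hard) .. 5 (trivial)
    impact: int          # hypothetical scale: 1 (minor) .. 5 (critical)

    @property
    def score(self):
        return self.exploitability * self.impact

    @property
    def severity(self):
        # Illustrative thresholds on the 1..25 score range.
        if self.score >= 20:
            return "critical"
        if self.score >= 12:
            return "high"
        if self.score >= 6:
            return "medium"
        return "low"


def prioritize(findings, keep=("critical", "high")):
    """Drop lower-severity findings and rank the rest, highest score first."""
    kept = [f for f in findings if f.severity in keep]
    return sorted(kept, key=lambda f: f.score, reverse=True)
```

Because the two factors are multiplied, a trivially exploitable but low-impact issue (score 5) cannot outrank a moderately exploitable path to a critical asset (score 15). Other weightings are equally defensible.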

The result is structured, actionable guidance that’s ready for an agentic workflow.

When a security design review produces standardized trust boundaries, ranked threats, and concrete remediation steps, that output doesn't sit idle in a document queue. It becomes active input for:

- Coding agents that receive explicit security constraints and hardened implementation patterns before generating code, turning threat findings into guardrails rather than afterthoughts

- Review agents that evaluate pull requests against specific attack paths and trust boundary violations identified during design, rather than relying on generic rulesets

- Penetration testing agents that consume the threat model to focus efforts on the highest-risk attack surfaces, validating whether identified threats were actually mitigated

- SecOps agents that absorb security requirements to inform monitoring, alerting, and incident response configurations, closing the loop from design-time risk to runtime defense

This is a fundamental shift: security architecture output becomes a shared contract across the entire development lifecycle, a structured representation of risk that every agent in the pipeline can interpret and enforce.
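Here is a hedged sketch of the "shared contract" idea. The design review emits versioned JSON. A review agent loads that JSON and selects the threats whose components a pull request touches. The schema fields, the version string, and the path-to-component mapping are all hypothetical.

```python
import json

# Hypothetical slice of design-review output, serialized as the shared contract.
CONTRACT = json.dumps({
    "schema_version": "1.0",
    "threats": [
        {"id": "T1", "component": "payments-api", "severity": "critical",
         "attack_path": "Unauthenticated caller reaches refund endpoint",
         "required_control": "Enforce service-to-service authn on /refunds"},
        {"id": "T2", "component": "notifications", "severity": "high",
         "attack_path": "User-controlled template rendered server-side",
         "required_control": "Render templates in a sandbox"},
    ],
})

# Hypothetical mapping from repository path prefixes to design components.
PATH_TO_COMPONENT = {
    "services/payments/": "payments-api",
    "services/notify/": "notifications",
}


def threats_for_pull_request(contract_json, changed_paths):
    """Return the design-time threats a review agent should check for this PR."""
    contract = json.loads(contract_json)
    if contract.get("schema_version") != "1.0":
        raise ValueError("unsupported contract version")
    touched = {
        component
        for path in changed_paths
        for prefix, component in PATH_TO_COMPONENT.items()
        if path.startswith(prefix)
    }
    return [t for t in contract["threats"] if t["component"] in touched]
```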

What this changes operationally

These changes don’t just improve how we perform security architecture reviews; they fundamentally change how security operates within the development lifecycle.

Security guidance becomes living input, not static artifacts

In an agentic SDL, the threat model transforms into living input that actively shapes how code is generated, reviewed, tested, and monitored. When designs change, security analysis updates automatically — and every downstream agent immediately inherits the new context.

This eliminates one of security architecture's most persistent failure modes: the gap between what was recommended and what was actually built.
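One plausible way to keep the threat model living is to fingerprint the design artifacts by content. Analysis re-runs only when the fingerprint changes, and each re-run bumps a version that downstream agents can watch. This is a sketch under stated assumptions: `analyze` stands in for the design review agent, and both it and the `LivingThreatModel` class are hypothetical.

```python
import hashlib
from pathlib import Path


def fingerprint(paths):
    """Content hash over a set of design artifacts, independent of listing order."""
    digest = hashlib.sha256()
    for path in sorted(Path(p) for p in paths):
        data = path.read_bytes()
        digest.update(str(path).encode() + b"\0")
        digest.update(len(data).to_bytes(8, "big"))  # length prefix avoids ambiguous concatenation
        digest.update(data)
    return digest.hexdigest()


class LivingThreatModel:
    """Re-runs analysis only when the underlying design artifacts change."""

    def __init__(self, analyze):
        self._analyze = analyze  # hypothetical callable: list[Path] -> threat model
        self._last_fingerprint = None
        self.model = None
        self.version = 0  # downstream agents can watch this to pick up new context

    def refresh(self, paths):
        current = fingerprint(paths)
        if current != self._last_fingerprint:
            self.model = self._analyze(sorted(Path(p) for p in paths))
            self._last_fingerprint = current
            self.version += 1
        return self.model
```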

The review queue dissolves

Historically, security architecture reviews operated as a queue. Teams submitted designs, waited for an available security architect, received feedback, iterated, and eventually obtained sign-off. That model created friction, delayed releases, and concentrated risk in a small number of overloaded experts.

The traditional review queue begins to dissolve. Security analysis happens concurrently with design. Teams don't wait for security — it’s already embedded in the artifacts their agents consume.

Security architecture cognitive load drops

AI agents capable of handling routine tasks — standard threat models, common vulnerability patterns, structured output generation — free security architects from the volume treadmill. They no longer face the impossible choice between reviewing the next design in queue and spending a week deeply analyzing a novel authentication architecture or complex multi-tenant isolation boundary.

This increased capacity matters enormously. The hardest security architecture problems are creative work: they require deep context, adversarial thinking, and architectural judgment, which is where human expertise remains essential.

These problems include:

- How do you design trust boundaries for systems where AI agents operate with delegated credentials across service boundaries?

- What are the emergent attack surfaces when multiple agentic systems interact in ways their individual designers never anticipated?

- How do you architect isolation guarantees that hold under adversarial conditions no scanner will ever model?

Agents aren't solving these problems today. A security architect freed from routine review backlogs can.

Security architecture becomes composable

Threat models no longer exist as monolithic documents. They become a set of structured findings, constraints, and requirements that different agents consume in different ways.

Coding agents get implementation-level guardrails. Penetration testing agents get attack paths to validate. SecOps agents get detection signatures to deploy.
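Here is a small sketch of composability. It assumes each finding is stored once with role-specific fields, and each consumer receives only its own projection. The field names and the `VIEWS` mapping are hypothetical.

```python
# One finding, written once by the design review agent. Field names are hypothetical.
FINDING = {
    "id": "T7",
    "component": "tenant-router",
    "threat": "Cross-tenant read via unscoped cache key",
    "guardrail": "Prefix every cache key with the authenticated tenant ID",
    "attack_path": "Request tenant B's resource ID while authenticated as tenant A",
    "detection": "Alert when a cache hit's tenant prefix differs from the caller's tenant",
}

# Fields each consumer needs; everything else stays out of its context.
VIEWS = {
    "coding": ("id", "component", "guardrail"),
    "pentest": ("id", "component", "attack_path"),
    "secops": ("id", "component", "detection"),
}


def view_for(agent_role, finding):
    """Project a finding onto the fields a given agent role consumes."""
    return {key: finding[key] for key in VIEWS[agent_role] if key in finding}
```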

What's hard (and what we're working through)

This shift creates new opportunities, but it also introduces new challenges that we’re actively addressing.

General guidance versus organizational context

This represents one of the most subtle but consequential challenges in building an agentic SDL. A general-purpose threat model surfaces textbook risks: injection vulnerabilities, broken authentication, insecure deserialization. Most LLMs can identify these in a single pass, and while that’s useful, it's also just table stakes.

The real value of security architecture lies in organizational primitives that don't appear in any public framework:

- Internal trust boundaries your platform recognizes

- Specific authentication and authorization patterns your services rely on

- Data classification tiers governing how sensitive assets flow between components

- Infrastructure conventions defining what "secure by default" means in your environment

This context gap compounds quickly. The less organizational specificity an agent possesses, the lower the signal-to-noise ratio of its output. Engineers (and their agents) learn to ignore generic findings. Security teams waste cycles triaging false positives. The promise of agentic security — scaling expertise, not just volume — erodes.

Closing this gap requires deliberately encoding organizational security knowledge into artifacts that agents consume: not just "here are the threats," but "here are the threats given how we build, the patterns we recognize as safe, and the boundaries our architecture enforces."

That's the difference between an agent that produces security guidance and an agent that produces security guidance that matters.
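Here is a hedged sketch of encoding organizational primitives as data. Approved platform patterns suppress the generic threat categories they already mitigate, and data classification tiers drive ranking. Every pattern name, category, and tier is a hypothetical placeholder. The sketch also assumes a component's declared patterns are actually in place, which a real system would need to verify.

```python
# Hypothetical organizational primitives; in practice these would be maintained
# by the security team alongside platform documentation.
ORG_CONTEXT = {
    "approved_patterns": {
        # pattern name -> generic threat categories it is known to mitigate
        "mesh-mtls": {"service-spoofing", "in-transit-disclosure"},
        "central-authz-sidecar": {"missing-authorization"},
    },
    "data_tiers": {"public": 0, "internal": 1, "confidential": 2, "restricted": 3},
}


def contextualize(findings, component_patterns, org=ORG_CONTEXT):
    """Drop generic findings already mitigated by an approved pattern, then
    rank the remainder by the data tier of the asset involved (most sensitive first)."""
    mitigated = set()
    for pattern in component_patterns:
        mitigated |= org["approved_patterns"].get(pattern, set())
    relevant = [f for f in findings if f["category"] not in mitigated]
    return sorted(relevant, key=lambda f: org["data_tiers"][f["data_tier"]], reverse=True)
```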

Context boundaries matter

Security design review agents need access to comprehensive design artifacts, but downstream agents require appropriately scoped subsets of that analysis. A coding agent working on a single microservice shouldn't receive the full threat model for an entire platform. Getting context scoping right proves essential for both performance and accuracy.
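Here is a minimal sketch of context scoping. It assumes each threat in the platform model records the components on either side of the boundary it concerns. A coding agent working on one service then receives only the threats that involve that service, including boundaries it shares with its neighbors. The data and field names are hypothetical.

```python
# Hypothetical platform-wide threat model: each threat names the components
# on either side of the trust boundary it concerns.
PLATFORM_THREATS = [
    {"id": "T1", "components": {"checkout", "payments-api"}},
    {"id": "T2", "components": {"payments-api", "ledger-db"}},
    {"id": "T3", "components": {"search", "catalog-db"}},
    {"id": "T4", "components": {"checkout"}},
]


def scope_for(component, threats=PLATFORM_THREATS):
    """Threats involving `component`, including boundaries it shares with neighbors."""
    return [t for t in threats if component in t["components"]]
```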

Feedback loops are still forming

The full vision — where penetration testing results flow back to update threat models, which update coding agent constraints, which reduce future vulnerabilities — requires robust feedback infrastructure. We're building toward closed-loop learning, but the connective tissue between agents continues to mature.
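Since that feedback infrastructure is still forming, the following sketches only one possible step: folding penetration test outcomes back into the threat model. It assumes results are keyed by threat ID. Exploited threats are escalated one severity level. Threats that could not be reproduced are recorded as such, without being treated as proven mitigations. All names are hypothetical.

```python
from dataclasses import dataclass, field

SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass
class Threat:
    id: str
    severity: str
    status: str = "open"  # open | exploited | not_reproduced
    evidence: list = field(default_factory=list)


def apply_pentest_results(threats, results):
    """Fold penetration-test outcomes back into the threat model.

    `results` maps threat ID -> (exploited: bool, note: str). Exploited threats
    are escalated one severity level, capped at critical. Returns the IDs whose
    status changed, i.e. what downstream agents would need to re-pull.
    """
    by_id = {t.id: t for t in threats}
    changed = []
    for threat_id, (exploited, note) in results.items():
        threat = by_id.get(threat_id)
        if threat is None:
            continue  # new findings would need triage into the model; not handled here
        if exploited:
            idx = SEVERITY_ORDER.index(threat.severity)
            threat.severity = SEVERITY_ORDER[min(idx + 1, len(SEVERITY_ORDER) - 1)]
        new_status = "exploited" if exploited else "not_reproduced"
        threat.evidence.append(note)
        if new_status != threat.status:
            threat.status = new_status
            changed.append(threat_id)
    return changed
```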

Conclusion: a new operating model for security architecture

Security architecture is undergoing a structural shift.

For years, security teams have focused on producing guidance for human developers — documents, threat models, and design reviews that teams interpret and implement over time. That model worked when software development moved at a human pace.

But development workflows are changing. As coding agents, review agents, and testing agents become embedded across the software lifecycle, security architecture must evolve alongside them.

When threat models produce machine-readable, agent-consumable artifacts, security stops being something developers occasionally reference. Instead, it becomes structured security intelligence that flows directly into how systems are built, reviewed, tested, and operated.

This doesn’t eliminate the role of human security architects. It elevates it.

AI can scale routine analysis and generate structured guidance at machine speed. Human experts remain responsible for the work that requires judgment, creativity, and adversarial thinking — the complex architectural problems and novel attack surfaces that no agent can fully reason through today.

If Big Bet #1 changes how our SOC operates, Big Bet #2 improves the quality and adaptability of our detections, and Big Bet #3 reduces the underlying vulnerability exposure in our systems, Big Bet #4 moves even further upstream — embedding security intelligence directly into how systems are designed and built.

Security architecture stops being a bottleneck and becomes an integrated part of the development system itself.

That’s what security architecture at product velocity really means.

And it’s the foundation for the final shift in this series: continuously validating those defenses through AI-assisted penetration testing.