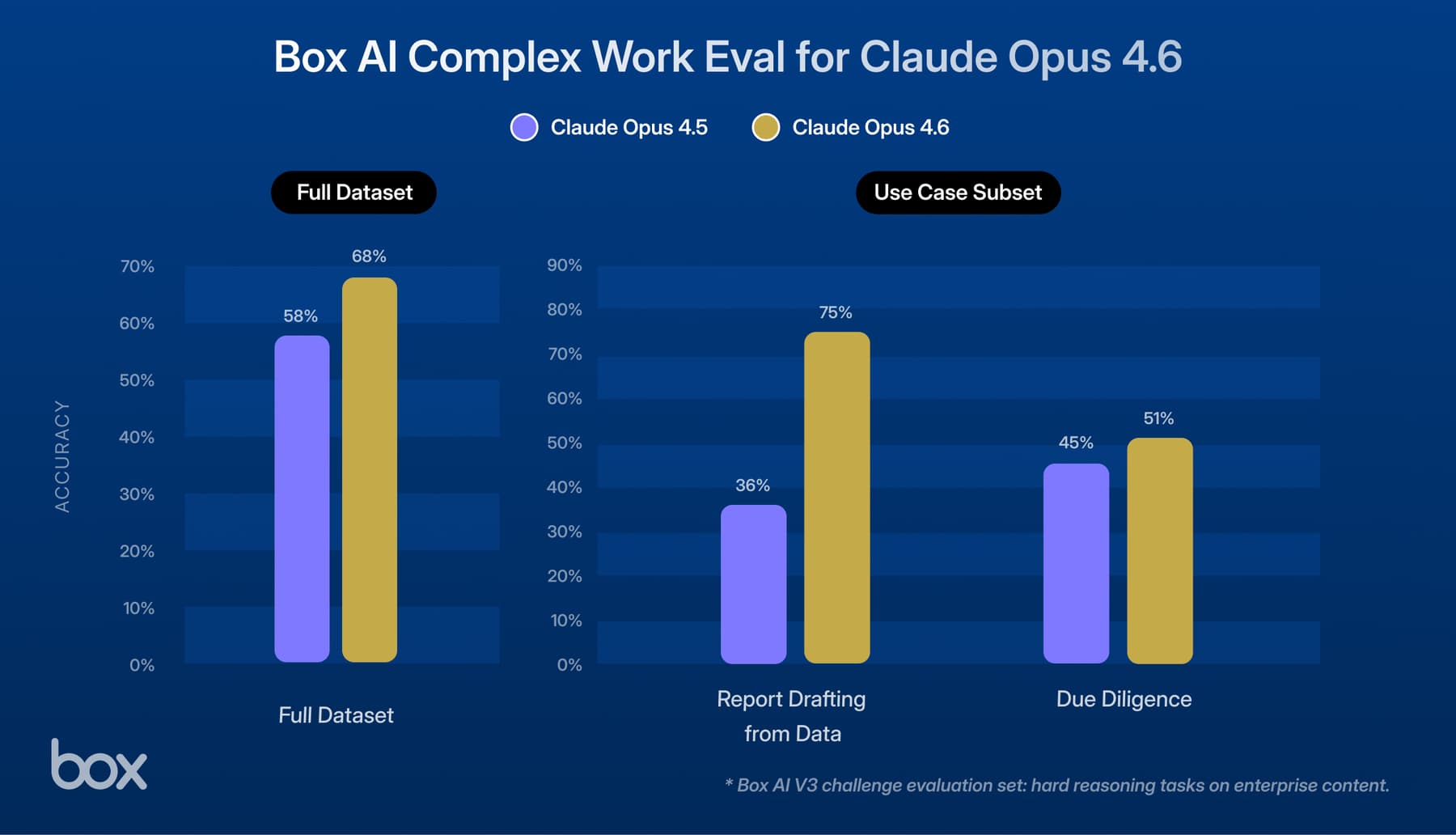

Today, Box is bringing Claude Opus 4.7 to our platform — and our early results show it's a significant step up in efficiency without compromising on the high standard of performance the Opus family is known for. We've been benchmarking Opus 4.7 against Opus 4.6 across agentic workflows, and the findings are striking.

As enterprise AI use cases grow in complexity, raw answer quality is no longer the only measure of a great model. What matters just as much is how efficiently a model can reason, call tools, retrieve information, and drive work to completion. In deep agentic scenarios — where tasks unfold across many steps and iterations — finding the fastest path to a high-quality answer is critical. Our testing shows that Claude Opus 4.7 makes meaningful strides on this dimension. Across every metric we tracked, it consistently required fewer model and tool calls, responded faster, and consumed fewer AI Units overall.

For all of the above metrics, we used the Box Complex Work Evaluation, which was deliberately designed to require heavy reasoning. These longer horizon tasks require analysis, report drafting, diligence, and verification across a wide variety of industries.

Fewer Model and Tool Calls

One of the clearest signals in our testing was the reduction in both LLM calls and tool calls, and what that means in practice is that Opus 4.7 gets to the right answer in fewer steps, with less overhead.

Claude Opus 4.7 averaged 7.1 LLM calls, compared with 16.3 for Claude Opus 4.6— a more than 2x reduction in model invocations to complete the same work.Tool call usage tells the same story: 9.4 calls on average for Opus 4.7 versus 18.8 for Opus 4.6. In both cases, the model is doing more with less.

This matters because in agentic systems, every unnecessary call adds latency, cost, and orchestration complexity. Opus 4.7 appears to reason more decisively — where Opus 4.6 would iteratively verify information across multiple passes, Opus 4.7 often reached the same conclusion in a single computation step. The result is a more streamlined path from user input to final answer, which at enterprise scale compounds into meaningful gains in speed and cost efficiency.

Lower Latency

Efficiency gains were also visible in latency metrics. For task duration, Claude Opus 4.7 completed tasks with a p50 latency of 183 seconds, versus 242 seconds for Claude Opus 4.6. That means users see the final output materially sooner, which improves overall user experience and utility from the agent.

In enterprise settings, that delta matters more than it might seem. When users are waiting on AI to complete multi-step knowledge work, every second of unnecessary latency erodes trust and limits how broadly these tools get adopted. Faster, more decisive reasoning doesn't just improve the experience — it makes agentic workflows practical at scale in a way that slower models simply can't match.

Lower AI Unit Usage

AI Unit efficiency told the same story. Box tracks the tokens consumed by an agent as “AI Units” so a more efficient model means every AI Unit goes further. Opus 4.7 used a median of 282 AI Units versus 401 for Opus 4.6 — a 30% reduction that flows directly to cost and scalability.

For enterprises running AI at high volumes, that gap compounds quickly. Fewer AI Units per task means lower spend per workflow, more headroom to handle complex tasks within context limits, and the ability to scale agentic use cases without a proportional increase in cost. Opus 4.7 doesn't just do the same work more efficiently — it opens the door to workflows that would have been cost-prohibitive before.

Why This Matters for Enterprises

Taken together, these results point to something more significant than incremental improvement. Across every dimension we measured — model calls, tool calls, latency, and AI Unit usage — Opus 4.7 did the same work more decisively and with less overhead, while holding the line on output quality. That is the combination that matters for enterprise AI: not efficiency at the expense of quality, but efficiency that runs alongside it.

A model that reaches high-quality answers in fewer steps, at lower latency, and with lower AI Unit consumption changes what's practical at scale. It means organizations can run more workflows for the same cost, tackle more complex tasks without hitting context or budget ceilings, and deploy agents that users actually trust because they're fast enough to feel responsive. Efficiency and quality are often framed as a tradeoff — our testing suggests Opus 4.7 challenges that assumption.

For Box customers, this translates directly into AI experiences that are more powerful and more practical. Faster task completion means less time waiting on agents to finish multi-step work. Lower AI Unit usage means greater scalability across the high-volume workflows that define enterprise AI in practice. And fewer model and tool calls mean less orchestration complexity — which matters as organizations push into more sophisticated, multi-agent architectures.

Get Started Today

Claude Opus 4.7 is available today in Box AI Studio and through the Box API.