The ground has shifted

AI is no longer just assisting attackers; it’s accelerating them.

Agent collectives now rank among the top performers on bug bounty platforms. Open-source frameworks can automate entire attack chains. And systems like those demonstrated in DARPA’s AI Cyber Challenge have shown that AI can find vulnerabilities in hours, not days.

The result: the time to exploit is collapsing, and the cost of launching sophisticated attacks is dropping.

Adversaries are no longer operating in bursts. They’re operating continuously.

This is the asymmetry we need to address.

The core problem we're solving

Traditional penetration testing provides valuable insight, but it suffers from a fundamental limitation: it’s episodic.

Organizations typically test their environments at specific points in time: before major releases, after significant changes, or during compliance cycles. But modern systems don’t operate on fixed schedules. They change continuously.

Services deploy daily. Infrastructure is ephemeral. Identity relationships evolve rapidly. Third-party integrations expand. AI agents are beginning to interact with systems autonomously.

By the time a penetration test report is delivered, the system it describes may already be different. The result is a fundamental mismatch: security validation happens periodically, while system risk evolves continuously.

Adversaries have already made the shift.

They aren’t waiting for scheduled testing windows. They’re probing environments continuously — generating exploits, exploring attack paths, and adapting in real time. AI has accelerated this even further, reducing the time to develop working exploits and enabling attackers to chain together multiple low- and medium-severity issues into high-impact attacks.

In this environment, point-in-time penetration testing provides only a partial view of risk. It tells you what was exploitable at a moment in time, not what is exploitable now.

As systems grow more complex, human-led penetration testing doesn’t scale. Highly skilled offensive security practitioners remain one of the scarcest resources in cybersecurity. Their time is best spent investigating novel attack paths and complex architectural weaknesses, not repeatedly testing routine controls.

Penetration testing isn’t the problem; the point-in-time model is.

Our vision: Continuous adversarial validation

At Box, we believe penetration testing needs to evolve from episodic engagement to continuous validation, because the adversary has already made that transition.

AI makes it possible to move from periodic testing to continuously evaluating systems for real-world exploitability. In this model, AI agents simulate adversarial behavior across the environment:

- Enumerating attack surfaces

- Testing authentication and authorization boundaries

- Probing API behaviors

- Exploring privilege escalation paths

- Validating trust boundaries

This represents a fundamental shift:

Episodic testing → Continuous validation

Point-in-time findings → Real-time exploitability

Human-only testing → Human + agent collaboration

Periodic assurance → Persistent adversarial pressure

If attackers are operating at machine speed, our defense has to match that tempo. Continuous adversarial validation is how we close that gap.

Rather than replacing human penetration testers, these agents expand the surface area that can be evaluated continuously. AI agents provide continuous coverage across the environment, while human experts focus on the areas that require creativity, intuition, and deep adversarial thinking.

This creates a new operating model: AI continuously probes for known classes of weaknesses and emerging misconfigurations, while humans design testing strategies, investigate ambiguous findings, and validate novel attack chains.

The result isn’t automated penetration testing; it’s continuous adversarial validation.

How we're approaching it

Our approach combines agentic AI with human expertise to continuously evaluate exploitability across the attack surface. Rather than replacing traditional penetration testing, this model augments the work of our offensive security team by continuously probing the environment for weaknesses between offensive operations.

Building a living attack surface

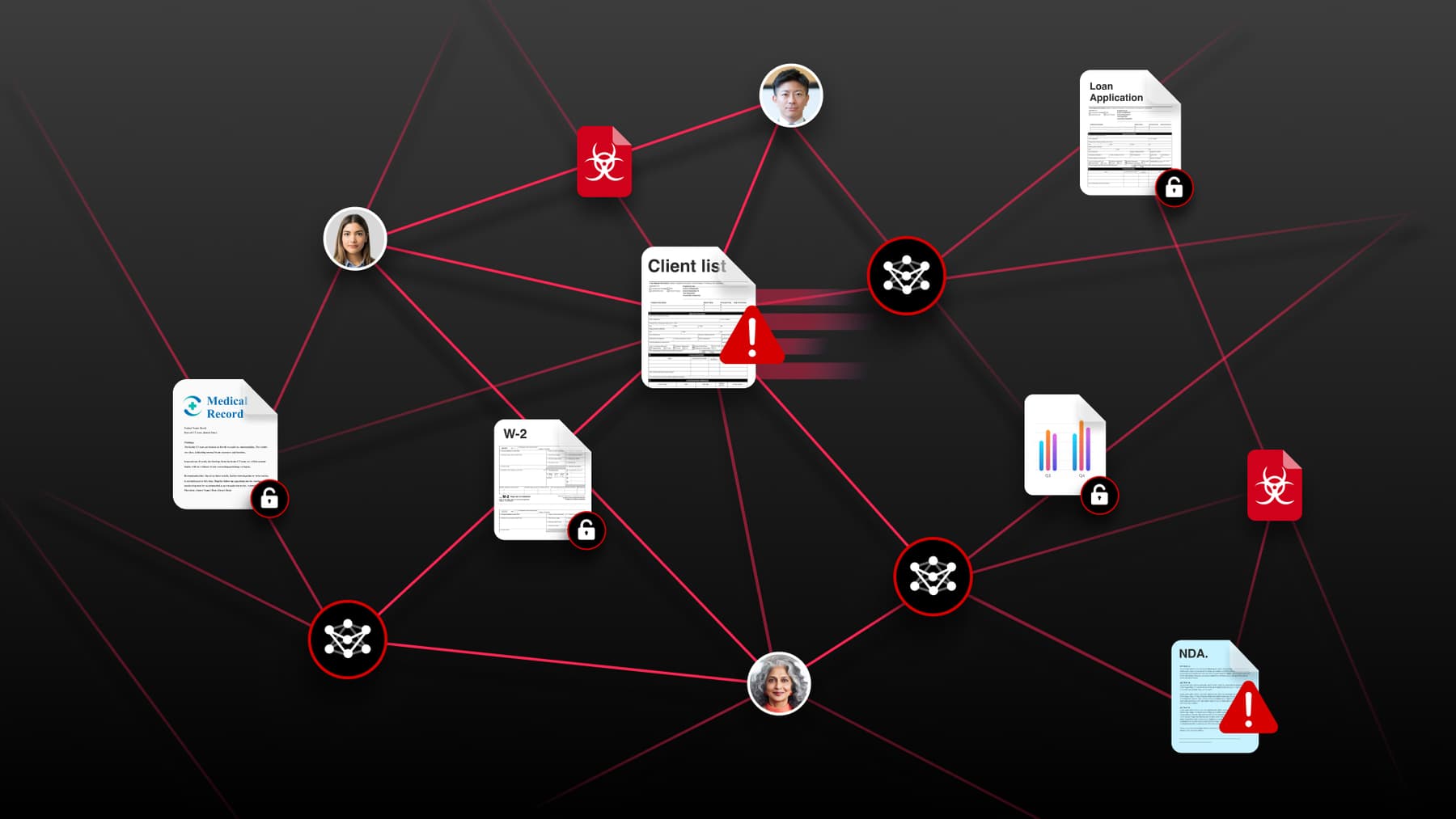

Continuous adversarial validation begins with understanding the full attack surface. Modern environments contain thousands of potential entry points across applications, APIs, identity systems, and infrastructure.

Rather than relying solely on static inventories, we focus on continuously mapping the environment in order to identify:

- Exposed services and APIs

- Authentication and authorization boundaries

- Identity relationships and permissions

- External integrations and dependencies

- Sensitive data flows

This creates a living representation of the attack surface that can guide adversarial testing. For offensive security teams, this mapping becomes a starting point for identifying where meaningful attack paths may exist.
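To make the idea of a living inventory more concrete, here is a minimal Python sketch. It is not a description of Box’s tooling. It assumes each discovery pass produces a snapshot of entry points and compares two snapshots to surface what is new. The `Asset` fields and the `diff_surface` and `unauthenticated_additions` helpers are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One reachable entry point in the attack surface (hypothetical schema)."""
    kind: str      # e.g. "api", "service", "integration"
    name: str      # human-readable identifier
    endpoint: str  # where the asset is reachable
    auth: str      # authentication mechanism, or "none"


def diff_surface(previous: set[Asset], current: set[Asset]) -> dict[str, set[Asset]]:
    """Compare two discovery snapshots and report what appeared or disappeared."""
    return {"added": current - previous, "removed": previous - current}


def unauthenticated_additions(changes: dict[str, set[Asset]]) -> set[Asset]:
    """Newly added assets that accept unauthenticated traffic, a natural first test target."""
    return {a for a in changes["added"] if a.auth == "none"}


# Usage with two illustrative snapshots.
before = {Asset("api", "files-api", "https://api.example.com/v2/files", "oauth")}
after = before | {Asset("api", "debug-api", "https://api.example.com/debug", "none")}
changes = diff_surface(before, after)
print(sorted(a.name for a in unauthenticated_additions(changes)))  # ['debug-api']
```

Diffing each pass against the previous one is one simple way to keep the map current without rebuilding it from scratch.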

Maintaining system context

Understanding the attack surface isn’t a one-time exercise. Modern environments evolve constantly as services are deployed, infrastructure is reconfigured, and permissions change. Continuous adversarial validation requires maintaining an up-to-date understanding of the system's structure, including:

- Service relationships

- Trust boundaries

- Identity and privilege models

- Network exposure

- Data access paths

This contextual understanding allows testing systems to evaluate how an attacker might move through the environment, rather than simply identifying isolated vulnerabilities. Human penetration testers can then focus their expertise on the areas where the architecture presents the greatest risk.
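One common way to reason about how an attacker moves through an environment is to model it as a graph and search it. The sketch below assumes a hypothetical adjacency map in which an edge from X to Y means an attacker who controls X can reach or act as Y. It uses breadth-first search to return the shortest path from an entry point to a sensitive asset. The node names are illustrative only.

```python
from collections import deque
from typing import Optional

# Hypothetical environment: an edge X -> Y means control of X lets an attacker reach or act as Y.
ATTACK_GRAPH: dict[str, list[str]] = {
    "internet": ["public-web", "partner-webhook"],
    "public-web": ["app-service"],
    "app-service": ["svc-account:app"],
    "partner-webhook": ["integration-worker"],
    "integration-worker": ["svc-account:integrations"],
    "svc-account:integrations": ["customer-data-store"],
}


def find_attack_path(graph: dict[str, list[str]], entry: str, target: str) -> Optional[list[str]]:
    """Breadth-first search: return the shortest hop sequence from entry to target, or None."""
    queue = deque([[entry]])  # each queue item is a partial path ending at the node to expand
    visited = {entry}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


print(find_attack_path(ATTACK_GRAPH, "internet", "customer-data-store"))
# ['internet', 'partner-webhook', 'integration-worker', 'svc-account:integrations', 'customer-data-store']
```

Because BFS expands nodes in order of distance, the first path it returns has the fewest hops. A real system would likely weight edges by attacker effort or enumerate several paths, which this sketch does not attempt.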

Given that AI-powered adversaries are now capable of chaining low-severity findings into high-impact attack paths, this holistic view of the environment is more important than ever.

Testing on change, not on schedule

The attack surface changes every time a system changes. New deployments, configuration updates, and identity changes can introduce new attack paths even when the underlying code remains secure.

Instead of relying on fixed testing schedules, continuous adversarial validation focuses on testing when meaningful changes occur. Signals that may trigger validation include:

- New service deployments

- API modifications

- Infrastructure configuration changes

- Newly exposed endpoints

- Identity or permission updates

By reacting to changes in the environment, adversarial testing stays aligned with the current system state, instead of the architecture that existed during the last pentest.
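A change-driven trigger can be as simple as routing each signal type to the validation suites it is most likely to affect. The routing table, the `ChangeSignal` type, and the suite names below are all hypothetical. They mirror the signals listed above rather than any real pipeline.

```python
from dataclasses import dataclass

# Hypothetical routing table from change-signal type to the validation suites it should trigger.
SUITES_BY_SIGNAL: dict[str, list[str]] = {
    "service_deployed": ["surface_enumeration", "authn_boundaries"],
    "api_modified": ["authz_boundaries", "api_behavior"],
    "infra_config_changed": ["network_exposure"],
    "endpoint_exposed": ["surface_enumeration", "authn_boundaries"],
    "identity_changed": ["privilege_escalation", "trust_boundaries"],
}


@dataclass(frozen=True)
class ChangeSignal:
    kind: str    # one of the keys in SUITES_BY_SIGNAL
    target: str  # the service, API, or identity that changed


def plan_validation(signals: list[ChangeSignal]) -> list[tuple[str, str]]:
    """Turn a batch of change signals into de-duplicated (target, suite) jobs, preserving order.

    Unrecognized signal kinds produce no jobs in this sketch.
    """
    jobs: list[tuple[str, str]] = []
    seen: set[tuple[str, str]] = set()
    for signal in signals:
        for suite in SUITES_BY_SIGNAL.get(signal.kind, []):
            job = (signal.target, suite)
            if job not in seen:
                seen.add(job)
                jobs.append(job)
    return jobs


batch = [
    ChangeSignal("service_deployed", "files-api"),
    ChangeSignal("endpoint_exposed", "files-api"),  # overlaps with the deployment above
    ChangeSignal("identity_changed", "svc-account:app"),
]
for target, suite in plan_validation(batch):
    print(target, suite)
```

Deduplicating by `(target, suite)` keeps a burst of related changes, such as a deployment that also exposes a new endpoint, from launching the same test twice.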

This is a direct answer to the adversary's continuous posture. We don't wait for a scheduled window; we test when the environment changes, because that's when new attack paths are most likely to emerge and when adversaries are most likely to find them first.

Validating real attack paths

A major challenge with traditional security tooling is distinguishing between theoretical vulnerabilities and issues that can actually be exploited. Our approach emphasizes validating exploitability through agentic testing.

AI agents simulate attacker behavior to determine whether an issue can be leveraged to produce a real attack path. This may include:

- Testing authentication flows

- Attempting privilege escalation

- Chaining weaknesses across services

- Reproducing exploit conditions in the application

Rather than simply reporting potential vulnerabilities, the system attempts to confirm whether those issues create viable attack paths in the environment. This dramatically improves signal quality and ensures remediation efforts focus on findings that meaningfully increase exposure.

This matters even more in the AI adversary era. When attackers can automatically chain medium- and low-severity vulnerabilities into critical attack paths, our own validation systems need to think the same way: not just flagging individual issues, but evaluating whether they can be combined into something dangerous.
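Chaining can be illustrated with forward chaining over attacker capabilities. Each finding requires some capabilities and grants others, and a chain exists if repeatedly applying findings reaches a goal capability. This is a minimal sketch under that assumption. The findings, capability names, and severities are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    name: str
    severity: str             # severity as rated in isolation
    requires: frozenset[str]  # attacker capabilities needed to exploit it
    grants: frozenset[str]    # capabilities the attacker gains by exploiting it


def chain_findings(findings: list[Finding], start: set[str], goal: str) -> Optional[list[Finding]]:
    """Forward-chain findings from the attacker's starting capabilities.

    Repeatedly applies any unused finding whose prerequisites are satisfied and that adds
    a new capability, until the goal is reached or a full pass makes no progress.
    Returns the findings applied, in order, if the goal is reached; otherwise None.
    """
    capabilities = set(start)
    applied: list[Finding] = []
    progress = True
    while progress and goal not in capabilities:
        progress = False
        for finding in findings:
            if finding in applied:
                continue
            if finding.requires <= capabilities and not finding.grants <= capabilities:
                capabilities |= finding.grants
                applied.append(finding)
                progress = True
    return applied if goal in capabilities else None


F = frozenset
findings = [
    Finding("verbose error leaks internal user IDs", "low", F({"network"}), F({"user_ids"})),
    Finding("IDOR on file metadata endpoint", "medium", F({"authenticated", "user_ids"}), F({"file_metadata"})),
    Finding("open self-signup on a test tenant", "low", F({"network"}), F({"authenticated"})),
    Finding("share token derivable from file metadata", "medium", F({"file_metadata"}), F({"read_customer_files"})),
]
chain = chain_findings(findings, {"network"}, "read_customer_files")
print([f.severity for f in chain])  # ['low', 'low', 'medium', 'medium']
```

No finding in the example is rated above medium on its own, yet together they reach the goal. The returned list contains every finding the loop applied, which in general can include steps that were not strictly needed to reach the goal.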

Human offensive security experts remain essential in this process: validating complex attack chains, investigating ambiguous results, and applying adversarial reasoning that automation cannot replicate.

Human oversight and expert validation

Despite advances in AI-driven testing, human expertise remains essential. Human security experts:

- Validate findings

- Investigate complex exploit chains

- Refine testing strategies

- Ensure testing remains safe and controlled

AI provides the scale and persistence of testing, while humans provide the judgment and adversarial creativity that machines cannot replicate.

What this changes operationally

The shift to continuous adversarial validation changes the operational rhythm of the security team in fundamental ways.

From calendar-driven to signal-driven

Security validation is no longer scheduled on a fixed cadence (e.g., weekly, monthly, quarterly, or annually). It’s triggered by changes in the environment: deployments, configuration updates, and new integrations. The team responds to signals, not calendars.

Living risk posture instead of snapshots

Instead of a pentest report that reflects a moment in time, the team has a continuously updated view of exploitability across the attack surface. Risk posture is a living document, not a periodic deliverable.

Validation instead of triage

Rather than waiting for a vulnerability scanner to flag an issue and then triaging it, the team is continuously validating whether known weaknesses can actually be exploited. This is especially critical given that AI adversaries are now chaining low-severity findings into high-impact attacks.

Amplified human expertise

Human offensive security practitioners are among the most valuable and scarce resources in the industry. Continuous AI-driven validation frees them from routine coverage work and focuses their expertise on the novel, complex attack paths that require genuine adversarial creativity.

What's hard (and what we've learned)

This isn’t a solved problem. Continuous adversarial validation is a fundamentally different operating model, and it introduces new challenges across signal quality, safety, and system context.

Signal vs. noise

AI-driven testing can generate a high volume of findings. Without validation, this quickly becomes another source of noise. The differentiator is exploitability. It’s not enough to identify potential issues; the system must determine whether they form viable attack paths in the real environment. Prioritization only works if findings reflect actual risk, not theoretical exposure.
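Making exploitability the gate can be sketched as follows. The sketch assumes each candidate finding carries a hypothetical `reproduce` hook that attempts the exploit under safe conditions and reports whether it succeeded. Confirmed findings are ranked by severity, and unconfirmed ones are held for human review rather than discarded, consistent with the role of human experts described earlier.

```python
from dataclasses import dataclass
from typing import Callable

SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1}


@dataclass
class Candidate:
    title: str
    severity: str
    # Hypothetical hook: safely attempts the exploit and returns True only if it succeeded.
    reproduce: Callable[[], bool]


def triage(candidates: list[Candidate]) -> tuple[list[Candidate], list[Candidate]]:
    """Gate on exploitability: confirmed findings ranked by severity, the rest held for review."""
    confirmed: list[Candidate] = []
    unconfirmed: list[Candidate] = []
    for candidate in candidates:
        (confirmed if candidate.reproduce() else unconfirmed).append(candidate)
    confirmed.sort(key=lambda c: SEVERITY_RANK[c.severity], reverse=True)
    return confirmed, unconfirmed


candidates = [
    Candidate("missing rate limit on login", "medium", lambda: True),
    Candidate("theoretical SSRF in URL preview", "critical", lambda: False),
    Candidate("cross-tenant read via export API", "high", lambda: True),
]
confirmed, unconfirmed = triage(candidates)
print([c.title for c in confirmed])    # ['cross-tenant read via export API', 'missing rate limit on login']
print([c.title for c in unconfirmed])  # ['theoretical SSRF in URL preview']
```

Note that the "critical" candidate drops out of the ranked list because it could not be reproduced, which is exactly the trade between theoretical severity and demonstrated exposure.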

Safe testing in production-adjacent environments

Adversarial testing that is too aggressive can cause unintended disruption. Defining safe boundaries is critical: what can be tested autonomously, what requires human approval, and how to ensure testing remains controlled while still being realistic. This is an ongoing calibration between coverage and operational safety.
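One way to encode those boundaries is an explicit policy that fails closed. The action names, risk tiers, and environment labels below are hypothetical. The point is the shape: read-only probing runs autonomously, state-changing actions need human approval outside staging, disruptive actions are never automated, and anything unrecognized defaults to a human decision.

```python
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"
    NEEDS_APPROVAL = "needs_human_approval"
    DENIED = "denied"


# Hypothetical risk tiers; a real program would define and review its own.
READ_ONLY = {"enumerate_endpoints", "probe_authentication", "probe_authorization"}
STATE_CHANGING = {"attempt_privilege_escalation", "replay_exploit"}
DISRUPTIVE = {"delete_data", "high_volume_fuzzing"}


def decide(action: str, environment: str) -> Decision:
    """Fail-closed policy for whether an agent may run a test action on its own."""
    if action in DISRUPTIVE:
        return Decision.DENIED  # never automated, in any environment
    if action in READ_ONLY:
        return Decision.AUTONOMOUS
    if action in STATE_CHANGING:
        # Only the isolated staging environment is trusted for unattended state changes.
        return Decision.AUTONOMOUS if environment == "staging" else Decision.NEEDS_APPROVAL
    return Decision.NEEDS_APPROVAL  # unknown actions default to a human decision


print(decide("replay_exploit", "production").value)  # needs_human_approval
```

Defaulting unknown actions to approval means a newly added agent capability cannot silently run unattended until someone assigns it a tier.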

Keeping pace with the adversary's tooling

The AI-powered offensive tooling landscape is evolving rapidly. Our defensive and validation tooling needs to evolve at a comparable pace. This isn’t a system you build once. It requires ongoing investment to ensure capabilities evolve alongside the adversary.

Chaining vulnerabilities at scale

AI adversaries are now capable of chaining medium- and low-severity vulnerabilities into high-impact attack paths. Teaching validation systems to think in chains, not just individual findings, is a harder problem than point-in-time exploit validation, and one we’re still actively working through.

Context determines quality

Like security engineers, AI does best when it has context from code, design documents, and other artifacts. Without that context, findings become generic and low-value. With it, validation becomes significantly more precise and more aligned with real-world risk.

Closing the loop

Big Bet #5 answers the final question: Do our defenses hold up under attack?

The goal isn’t just to find vulnerabilities — it’s to continuously validate real-world exploitability as systems evolve.

Continuous, AI-assisted penetration testing allows security teams to evaluate systems the way attackers do — dynamically, persistently, and across the full attack surface.

This isn’t about replacing human penetration testers; it's about extending their reach. AI agents can continuously probe environments for weaknesses, while human experts focus on the novel attack paths and architectural problems that require deep adversarial thinking.

If Big Bet #1 changes how our SOC operates,

Big Bet #2 improves how we generate detections,

Big Bet #3 reduces vulnerability exposure,

and Big Bet #4 scales security architecture across development…

Big Bet #5 validates that those defenses actually hold.

Together, these shifts begin to form a closed-loop system:

- Design identifies potential risk

- Detection identifies emerging threats

- Vulnerability management reduces exposure

- Penetration testing validates that defenses actually hold

Instead of reacting to failures, we’re moving closer to continuously proving that our systems remain secure as they evolve.