Cybersecurity is a race against time, and traditional detection engineering struggles to keep pace.

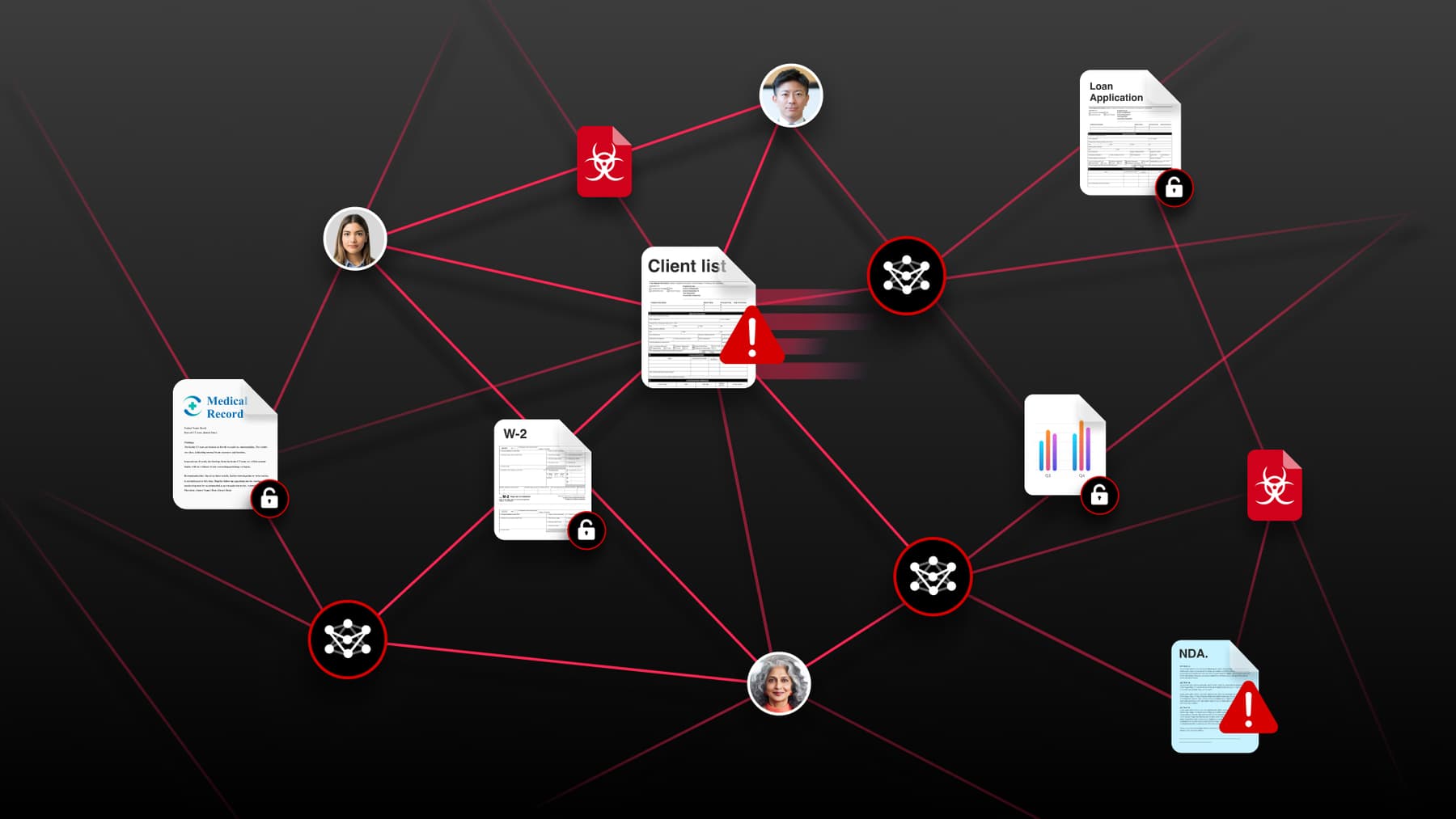

Security teams face enormous numbers of threat reports, blogs, advisories, and indicators of compromise (IOCs). Intelligence is fragmented across PDFs, Slack threads, and tickets. The human bottleneck is real, and adversaries iterate faster than manual detection cycles ever could.

The traditional cycle (reading reports, manually extracting indicators, mapping to MITRE ATT&CK, writing detection logic, and validating the detections) can take days or even weeks, and often ends with static IOCs that don’t keep up.

So at Box we’re making a big bet — we’re building a continuously learning detection ecosystem.

The vision: Near real-time detection engineering

We’re building a system where AI acts as a force multiplier across the entire pipeline:

Ingest → Understand → Translate → Detect → Validate → Improve

The goal isn’t just faster detection creation; it’s a continuously learning detection ecosystem. Here’s how we’re approaching it:

- Ingesting intelligence: AI agents ingest public threat research, vendor reports, government advisories, and ISAC feeds. Instead of analysts reading everything manually, AI extracts TTPs, identifies relevant MITRE techniques, normalizes IOCs, highlights novel attacker behaviors, and flags overlap with our environment.

- Determining environment relevance: Not every threat matters to Box. AI helps us prioritize threats according to what’s important for our environment — moving from generic threat feeds to context-aware threat intel.

- MITRE ATT&CK gap analysis: New intelligence is continuously mapped against our existing detection coverage to identify blind spots, weak signal areas, and high-risk techniques that lack sufficient coverage.

- Automated detection drafting: Threat intelligence is translated directly into SIEM queries and detection logic, producing draft detections automatically. Analysts who used to write every detection by hand now review and refine the drafts.

- Automated testing and validation: Drafted detections are validated against historical logs, evaluated for false positives, and tuned before deployment.
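To make the IOC normalization step from the ingestion bullet concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the production pipeline: the function names `refang` and `normalize_iocs` are hypothetical, the defanging conventions handled (`hxxp`, `[.]`, `(.)`, `[:]`) are only the most common ones, and the sample indicators use reserved documentation domains and addresses.

```python
import re
from urllib.parse import urlsplit, urlunsplit


def refang(ioc: str) -> str:
    """Undo common defanging conventions such as hxxp:// and [.]."""
    s = ioc.strip()
    s = re.sub(r"^hxxp", "http", s, flags=re.IGNORECASE)
    return s.replace("[.]", ".").replace("(.)", ".").replace("[:]", ":")


def canonical(ioc: str) -> str:
    """Refang and lowercase the case-insensitive parts of an indicator."""
    s = refang(ioc)
    if "://" in s:
        # For URLs, only the scheme and host are case-insensitive.
        p = urlsplit(s)
        return urlunsplit(
            (p.scheme.lower(), p.netloc.lower(), p.path, p.query, p.fragment)
        )
    # Assumption: anything else is a domain, IP address, or hash.
    return s.lower()


def normalize_iocs(raw: list[str]) -> list[str]:
    """Canonicalize indicators and drop duplicates, keeping first-seen order."""
    seen: set[str] = set()
    out: list[str] = []
    for item in raw:
        value = canonical(item)
        if value and value not in seen:
            seen.add(value)
            out.append(value)
    return out


# Hypothetical indicators as they might appear in a threat report.
report_iocs = [
    "hxxps://Evil[.]Example[.]com/Path",
    "EVIL[.]example[.]com",
    "evil.example.com ",
    "192.0.2[.]10",
]
print(normalize_iocs(report_iocs))
# ['https://evil.example.com/Path', 'evil.example.com', '192.0.2.10']
```

The URL path keeps its original case because paths are case-sensitive, while hosts and hashes are safe to lowercase for matching.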

What this changes operationally

We’re moving from reactive to proactive, from static IOCs to behavioral patterns. What used to take days of engineering will now take minutes of human validation.

The real shift isn’t technical. It’s operational.

When intelligence, detection drafting, validation, and feedback are connected into a single loop, the entire detection function changes.

Here’s where that shows up:

Detection coverage becomes continuous: Instead of periodic detection updates tied to incidents or analyst bandwidth, coverage is evaluated continuously against new intelligence.

We expect to see:

- Improved MITRE ATT&CK coverage of high-risk tactics

- Fewer uncovered techniques over time

- Faster identification of blind spots

- Reduced reliance on incidents to reveal gaps

Coverage becomes something we can observe improving, not something we assume.
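One simple way to make coverage observable is to compare the ATT&CK techniques we care about with the techniques our deployed detections claim to cover. The Python sketch below is illustrative only: the rule names, the `coverage_report` function, and the set of tracked techniques are hypothetical, and it assumes every detection is already tagged with technique IDs.

```python
def coverage_report(
    tracked: set[str], detections: dict[str, set[str]]
) -> tuple[float, list[str]]:
    """Return (coverage ratio, sorted uncovered technique IDs).

    tracked:    technique IDs considered relevant to the environment
    detections: rule name -> technique IDs that rule is tagged with
    """
    covered = set().union(*detections.values()) & tracked
    gaps = sorted(tracked - covered)
    ratio = len(covered) / len(tracked) if tracked else 1.0
    return ratio, gaps


# Hypothetical detection inventory and tracked techniques.
detections = {
    "suspicious_powershell": {"T1059.001"},
    "valid_account_anomaly": {"T1078"},
    "credential_dumping": {"T1003"},  # covered, but not in the tracked set
}
tracked = {"T1059.001", "T1078", "T1110", "T1566.001"}

ratio, gaps = coverage_report(tracked, detections)
print(f"{ratio:.0%} covered; gaps: {gaps}")
# 50% covered; gaps: ['T1110', 'T1566.001']
```

Recomputing a report like this each time new intelligence adds techniques to the tracked set turns coverage into a trend line rather than an assumption. A tag-based ratio still says nothing about detection quality, which is why validation matters as much as mapping.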

Detection engineering throughput increases without sacrificing rigor: The mechanics of building detections change as well. What used to require days of manual effort (reading reports, extracting indicators, drafting detections) shifts to structured human review and validation.

We expect to see:

- Reduced time from intelligence alerts to detection deployment

- Shrinking backlog of unoperationalized threat intelligence

- More consistent and predictable detection release cycles

Detection coverage is driven by intelligence, not limited by analyst bandwidth.

Signal quality improves upstream: Detections are drafted with context and validated before deployment.

This means:

- Higher signal-to-noise ratios

- Fewer noisy detections reaching production

- Reduced time spent tuning post-deployment

Analyst time shifts to higher-value work: As manual detection development decreases, our detection engineers spend more time on higher-value tasks like:

- Behavioral modeling

- Adversary chain analysis

- Detection stress testing

- Identifying subtle blind spots

We expect to see a measurable shift in how detection engineering time is allocated, from manual coding and validation to strategic refinement.

What we’re working through

We’re still early in this journey, and there are meaningful challenges we’re actively working through.

- Translating intelligence into detection logic isn’t deterministic: Threat reports describe intent and behavior in narrative form. Translating that into executable detection logic requires interpretation. AI can misread nuance, overgeneralize technique mappings, or collapse distinct behaviors into overly broad rules. MITRE ATT&CK alignment, in particular, can look correct on paper but misrepresent the actual detection semantics.

- Behavioral detections require context the model may not fully understand: Writing a detection isn’t just about querying a log source. It requires knowing which fields are reliable in our environment, what enrichments are consistently available, what “normal” looks like at our scale, and how our identity and architecture actually behave. Without strong operational grounding, AI-generated detections can be technically sound but operationally brittle.

- Validation must be as rigorous as for human-developed detections: Automatically engineered detections still need field validation, backtesting against historical logs, false positive measurement, and noise modeling. If AI speeds up creation but not validation, you simply accelerate bad detections.

- Integration complexity increases systemic risk: We’re integrating multiple previously unconnected systems — ingestion pipelines, intelligence normalization, MITRE ATT&CK mapping, detection validation, SIEM validation, and testing frameworks. These systems weren’t originally designed to operate as one continuous loop, and this increases complexity.

- Overconfidence at scale is dangerous: If the system generates detections that appear structurally sound but are poorly tuned, you don’t just create noise, you erode analyst trust. And trust decay is one of the fastest ways to stall adoption.

- Compounding learning requires feedback loops: A continuously learning system requires structured feedback: which detections generated noise, which were valuable, which techniques were already covered, and which mappings were weak. Without a deliberate feedback system, you don’t get a continuously learning ecosystem; you get automation without improvement.
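The validation and feedback points above can be sketched as a small backtest: replay a drafted rule over labeled historical events and record how often it fired correctly. This Python example is a sketch under stated assumptions, not a description of our tooling. The event fields (`action`, `count`, `label_malicious`), the `draft_rule` threshold, and the `backtest` helper are all hypothetical, and real backtesting rarely has clean ground-truth labels.

```python
from dataclasses import dataclass
from typing import Any, Callable

Event = dict[str, Any]


@dataclass
class BacktestResult:
    true_positives: int
    false_positives: int
    false_negatives: int

    @property
    def precision(self) -> float:
        """Share of alerts that were actually malicious (0.0 if none fired)."""
        fired = self.true_positives + self.false_positives
        return self.true_positives / fired if fired else 0.0


def backtest(rule: Callable[[Event], bool], events: list[Event]) -> BacktestResult:
    """Replay a rule over labeled historical events and count outcomes."""
    tp = fp = fn = 0
    for event in events:
        fired = rule(event)
        malicious = bool(event.get("label_malicious", False))
        if fired and malicious:
            tp += 1
        elif fired:
            fp += 1
        elif malicious:
            fn += 1
    return BacktestResult(tp, fp, fn)


def draft_rule(event: Event) -> bool:
    """Hypothetical drafted detection: many failed logins in one window."""
    return event.get("action") == "login_failed" and event.get("count", 0) >= 10


# Hypothetical labeled history; in practice labels come from analyst triage.
history = [
    {"action": "login_failed", "count": 25, "label_malicious": True},
    {"action": "login_failed", "count": 12, "label_malicious": False},
    {"action": "login_failed", "count": 3, "label_malicious": False},
    {"action": "login_success", "count": 1, "label_malicious": True},
]

result = backtest(draft_rule, history)
print(result, f"precision={result.precision:.2f}")
```

Storing results like these per detection gives the feedback loop something structured to learn from: a rule with low precision is a candidate for tuning before deployment, and missed malicious events point to behaviors the draft did not capture.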

Why this still matters

Despite the challenges, we believe this work is foundational. If adversaries are iterating in near real time, detection engineering cannot remain manual and reactive.

Big Bet #1 transforms our SOC with AI-driven detection and response.

Big Bet #2 ensures what reaches the SOC is smarter, more relevant, and higher signal.

They reinforce each other, and together, they redefine how a modern security operations program adapts.