Everyone is talking about AI in security. But where is it actually transformational?

At Box, we've identified five big bets: areas where we believe AI fundamentally changes how a modern security program operates.

- The SOC

- Threat intelligence and detection engineering

- Vulnerability management

- Security architecture and design

- Penetration testing

These aren't side experiments. They're structural shifts.

Over the next few posts, I'll share how we're approaching each one — what's real, what's hard, and what's working.

First up: the SOC.

Big Bet #1: AI transforms the SOC

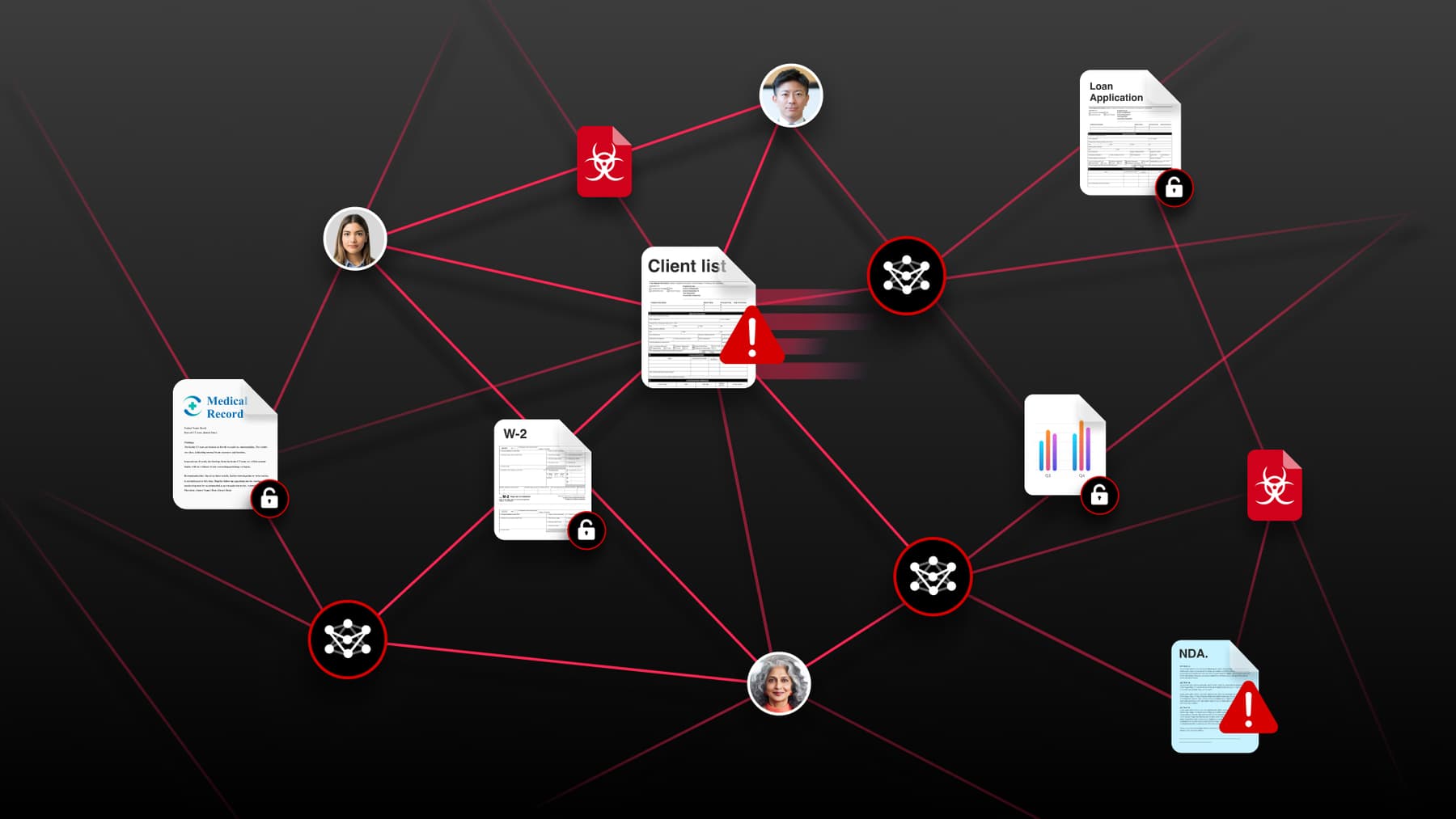

Box’s Security Operations Center (SOC) is where the real work of cybersecurity happens.

These are the people who build alerts and detections, monitor dashboards for anomalous activity, and serve as front-line first responders if something goes wrong.

Today, most SOC teams are trapped in a reactive loop: an alert fires, an analyst pivots into logs, correlates data across various sources, triages, escalates, then moves on to the next ticket in an ever-growing queue. It’s essential work, but it’s not the best use of highly skilled analysts.

For 2026, this is our biggest AI transformation bet. We aren’t just adding AI to existing operations — we’re redesigning how our SOC operates.

From alerts to autonomous action

Our goal is simple but ambitious: move from a model where analysts spend the majority of their time reacting to alerts to one where they spend at least 80% of their time on proactive, high-value security engineering such as detection tuning, behavioral analytics, and threat hunting.

To do that, we’ve moved beyond just using AI as an assistant.

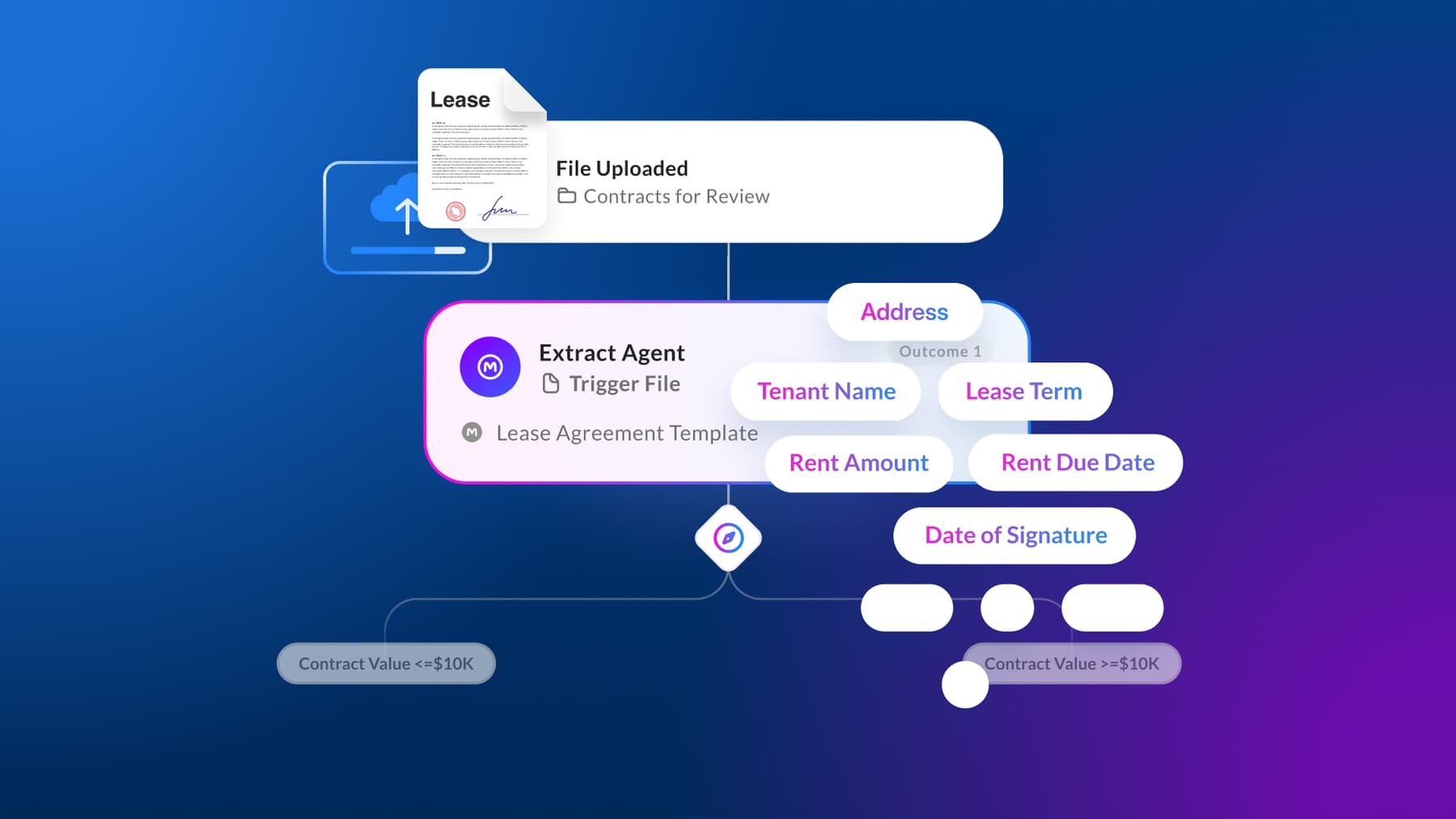

Today our SOC agent automatically executes predefined runbooks for approximately 38% of incoming events — the routine, lower-risk cases that make up the bulk of our alert volume. The agent:

- Executes containment or investigative steps

- Correlates supporting telemetry

- Applies decision logic based on policy

- Escalates or recommends closure

Always with a human in the loop. The difference is that the human is now reviewing synthesized conclusions instead of stitching together raw logs.
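
Here is a minimal Python sketch of that flow. It is illustrative only, not the production agent: the `Event` and `Recommendation` types, the `RUNBOOKS` registry, and the `known_scanner_ping` runbook are hypothetical names chosen for the example. The point it shows is that automation produces a recommendation plus a summary, and every outcome is still flagged for human review.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Event:
    event_id: str
    kind: str
    risk: str  # "low", "medium", or "high"


@dataclass
class Recommendation:
    event_id: str
    action: str   # "recommend_closure" or "escalate"
    summary: str  # synthesized conclusion shown to the analyst
    needs_human_review: bool = True  # a human stays in the loop for every outcome


def check_known_scanner(event: Event) -> List[str]:
    # Hypothetical runbook step: a real one would query asset inventory and logs.
    return ["source matches a registered internal scanner"]


# Hypothetical registry mapping an event kind to its predefined runbook.
RUNBOOKS: Dict[str, Callable[[Event], List[str]]] = {
    "known_scanner_ping": check_known_scanner,
}


def handle(event: Event) -> Recommendation:
    runbook = RUNBOOKS.get(event.kind)
    # Only routine, lower-risk events with a predefined runbook are automated.
    if runbook is None or event.risk != "low":
        return Recommendation(event.event_id, "escalate",
                              "no predefined runbook applies; needs analyst triage")
    notes = runbook(event)
    return Recommendation(event.event_id, "recommend_closure", "; ".join(notes))
```

An analyst reviewing `handle(Event("e-1", "known_scanner_ping", "low"))` would see a closure recommendation with its supporting note rather than a pile of raw logs.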

Automated enrichment, deeper insights

But executing predefined runbooks is just the beginning. For more than half of all events that are escalated to Tier 1 or Tier 2, the SOC agent performs automated enrichment:

- Pulling data from multiple log sources

- Correlating signals across identity, endpoint, and cloud telemetry

- Surfacing the most relevant context

- Presenting a structured investigative summary

Consider a simple example: a network ping.

On its own, this ping isn’t inherently malicious. But traditionally a human analyst would need to pivot across tools to determine whether it’s benign, suspicious, or part of a broader pattern. Now our AI agent performs that correlation in seconds — checking historical behavior, asset context, user activity patterns, and related signals — and presents a reasoned assessment.

This removes the mechanical investigative work and preserves human judgment for ambiguous or high-risk scenarios.
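
To make the ping example concrete, here is a small sketch of what a reasoned assessment could look like in code. The `PingContext` fields and the scoring rules are assumptions made up for illustration; real enrichment would pull these values from log sources and apply policy that isn't described here.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class PingContext:
    seen_before: bool        # historical behavior: has this source pinged this target before?
    asset_is_critical: bool  # asset context
    user_active: bool        # user activity: was a user active on the source at the time?
    related_alerts: int      # related signals in the same time window


def assess_ping(ctx: PingContext) -> Dict[str, Union[str, List[str]]]:
    # Collect only the observations that actually apply to this event.
    reasons: List[str] = []
    if not ctx.seen_before:
        reasons.append("source has not pinged this target before")
    if ctx.asset_is_critical:
        reasons.append("target is a critical asset")
    if not ctx.user_active:
        reasons.append("no user activity on the source at the time")
    if ctx.related_alerts > 0:
        reasons.append(f"{ctx.related_alerts} related alert(s) in the same window")

    # Illustrative rule: related alerts plus at least one other signal is suspicious.
    if not reasons:
        assessment = "likely benign"
    elif ctx.related_alerts > 0 and len(reasons) >= 2:
        assessment = "suspicious"
    else:
        assessment = "needs analyst review"
    return {"assessment": assessment, "reasons": reasons}
```

The summary carries its reasons with it, so the analyst can check the conclusion against the signals that produced it.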

The hard stuff, and what it taught us

This transformation wasn’t as simple as “add AI.” We encountered real limitations that required architectural changes.

Structural compliance didn’t equal analytical accuracy

Even when the model reliably returned valid JSON, it could still fabricate conclusions inside that structure. Format validation alone didn’t prevent invented SPF/DKIM/DMARC results or unsupported claims.
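
One way to catch this class of error is to compare what the model claims against what the header literally contains. The sketch below assumes the model returns a JSON object with `spf`, `dkim`, and `dmarc` keys (a hypothetical schema) and that the input is the value of an `Authentication-Results` header. The parsing is deliberately simplified and is not a full header parser.

```python
import json
import re
from typing import Dict, List


def observed_auth_results(header: str) -> Dict[str, str]:
    """Extract spf/dkim/dmarc results that literally appear in the header value."""
    found: Dict[str, str] = {}
    for mech, result in re.findall(r"\b(spf|dkim|dmarc)=([a-z]+)", header.lower()):
        found.setdefault(mech, result)  # keep the first result seen per mechanism
    return found


def unsupported_claims(model_json: str, header: str) -> List[str]:
    claims = json.loads(model_json)  # passing this step only proves the output is JSON
    observed = observed_auth_results(header)
    problems: List[str] = []
    for mech in ("spf", "dkim", "dmarc"):
        claimed = claims.get(mech, "unknown")
        actual = observed.get(mech, "unknown")  # absent from the header means unknown
        if claimed != actual:
            problems.append(f"{mech}: model said {claimed!r}, header shows {actual!r}")
    return problems
```

A non-empty result means the output was well-formed but not grounded, which is exactly the gap that format validation misses.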

Incomplete data triggered hallucinations

When authentication headers were partial or missing, the model sometimes tried to “complete the picture,” inferring pass/fail results or policy details that weren’t present instead of simply reporting them as unknown.

We had to explicitly engineer for uncertainty.

Required fields incentivized fabrication

When outputs required fields like findings, IOCs, and recommended next steps, the model sometimes generated filler content rather than acknowledging the absence of evidence.

This created noise and undermined analyst trust.
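
A sketch of the alternative: let every list be empty by default, and validate that anything reported is backed by evidence. The `Finding` and `TriageOutput` types below are hypothetical and only illustrate the idea that "nothing found" should be a valid, first-class answer.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Finding:
    statement: str
    evidence: str  # the exact header line or log excerpt supporting the statement


@dataclass
class TriageOutput:
    # Every list defaults to empty, so the model is never forced to fill it.
    findings: List[Finding] = field(default_factory=list)
    iocs: List[str] = field(default_factory=list)
    next_steps: List[str] = field(default_factory=list)
    evidence_available: bool = True


def validate(output: TriageOutput) -> List[str]:
    errors: List[str] = []
    for finding in output.findings:
        if not finding.evidence.strip():
            errors.append(f"finding without evidence: {finding.statement!r}")
    if not output.evidence_available and (output.findings or output.iocs):
        errors.append("findings or IOCs reported although no evidence was available")
    return errors
```

With this shape, `validate(TriageOutput())` passes cleanly, while a finding with a blank `evidence` string is rejected.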

IOC inflation created operational drag

Early outputs occasionally labeled benign IPs or domains as suspicious without strong header-based indicators. That increased analyst workload instead of reducing it.

Trust is fragile in a SOC. Once noise creeps in, adoption slows.
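
As a rough illustration of how IOC inflation can be curbed, the sketch below only keeps a candidate IP or domain when at least one header-based indicator was actually observed for it. The function name, the input shape, and the default threshold are assumptions for the example.

```python
from typing import Dict, List


def filter_iocs(candidates: Dict[str, List[str]], min_indicators: int = 1) -> List[str]:
    """Keep only candidates backed by enough observed header-based indicators.

    `candidates` maps an IP or domain to the indicators observed for it.
    """
    return sorted(
        ioc for ioc, indicators in candidates.items()
        if len(indicators) >= min_indicators
    )


# Hypothetical input: one candidate with an observed indicator, one with none.
example = {
    "203.0.113.7": ["spf=fail for this sending IP"],
    "198.51.100.2": [],
}
kept = filter_iocs(example)
```

Here only `203.0.113.7` survives, so the analyst isn't asked to chase an address that nothing in the headers implicates.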

Overconfidence required containment

The model sometimes produced high-confidence verdicts based on limited or ambiguous evidence. In an automated workflow, that level of certainty can trigger downstream actions.

Guardrails were essential.
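
One simple form such a guardrail can take is a gate between the model's verdict and any downstream action. In this sketch the thresholds, verdict labels, and action names are all hypothetical. The idea is that a confident verdict with thin evidence is routed to an analyst instead of triggering anything.

```python
def gate_action(verdict: str, confidence: float, evidence_count: int,
                min_confidence: float = 0.9, min_evidence: int = 2) -> str:
    """Decide what a verdict is allowed to trigger downstream."""
    # High confidence alone is not enough: it must be backed by observed evidence.
    well_supported = confidence >= min_confidence and evidence_count >= min_evidence
    if not well_supported:
        return "escalate_to_analyst"
    if verdict == "malicious":
        return "propose_containment"  # proposed only; a human still approves it
    if verdict == "benign":
        return "recommend_closure"
    return "escalate_to_analyst"  # unrecognized or ambiguous verdicts go to a human
```

For example, `gate_action("malicious", 0.99, 1)` escalates to an analyst because a single piece of evidence doesn't justify the stated certainty.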

We had to engineer determinism — not creativity

The breakthrough came when we stopped treating the model like an assistant and started writing the prompt like the logic of a decision engine.

We enforced strict order-of-operations.

We required observable evidence before conclusions.

We stopped evaluation at the first suspicious condition.

We explicitly prohibited inference beyond the headers.

The prompt became less conversational and more like policy logic.

In effect, it became a control mechanism — constraining assumptions, requiring observable signals, and making the model’s reasoning auditable and defensible.
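
The same policy logic can be expressed in ordinary code, which is a useful way to see what the prompt is being asked to do. The sketch below is an analogy, not the prompt itself: the check order (SPF, then DKIM, then DMARC), the verdict labels, and the simplified header parsing are assumptions for illustration.

```python
import re
from typing import Callable, List, NamedTuple, Optional, Tuple


class Verdict(NamedTuple):
    label: str
    rule: Optional[str]      # which check fired, if any
    evidence: Optional[str]  # the observed text that supports the label


def result_of(mechanism: str, auth_results: str) -> Optional[str]:
    match = re.search(rf"\b{mechanism}=([a-z]+)", auth_results.lower())
    return match.group(1) if match else None


def make_check(mechanism: str) -> Callable[[str], Optional[str]]:
    def check(auth_results: str) -> Optional[str]:
        result = result_of(mechanism, auth_results)
        # Only an explicitly observed failure counts; nothing is inferred.
        return f"{mechanism}={result}" if result == "fail" else None
    return check


# Strict order of operations: checks always run in this sequence.
ORDERED_CHECKS: List[Tuple[str, Callable[[str], Optional[str]]]] = [
    (name, make_check(name)) for name in ("spf", "dkim", "dmarc")
]


def evaluate(auth_results: str) -> Verdict:
    for name, check in ORDERED_CHECKS:
        evidence = check(auth_results)
        if evidence is not None:
            # Stop evaluation at the first suspicious condition.
            return Verdict("suspicious", name, evidence)
    missing = [name for name, _ in ORDERED_CHECKS if result_of(name, auth_results) is None]
    if missing:
        return Verdict("unknown", None, "no result for: " + ", ".join(missing))
    return Verdict("no_suspicious_condition", None, None)
```

Every verdict names the rule that fired and the text that triggered it, which is what makes the outcome auditable.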

Rather than replacing our operational maturity, AI amplified it.

What this changes operationally

For practitioners and leaders, this is not just automation — it changes core SOC metrics:

- Reduced alert fatigue

- Lower MTTR on routine cases

- Higher signal-to-noise ratio

- More analyst time spent on detection engineering

- Faster feedback loops between investigation and rule tuning

It also allows us to reinvest analyst capacity into proactive coverage rather than scaling headcount linearly with alert volume.

Elevating people, not replacing them

Security operations carries the heaviest operational burden in most programs, which makes it a natural starting point for AI transformation.

But this isn’t about replacing analysts. It’s about shifting their time from:

- Log correlation

- Repetitive enrichment

- Deterministic decision trees

To:

- Advanced behavioral detection design

- Threat-informed hunting

- Detection engineering informed by real-time intelligence

Sophisticated, adversarial thinking still requires human judgment.

AI handles the mechanical. Humans handle the ambiguous.

That's the future we're building toward.