We're witnessing a fundamental shift in autonomous agents like OpenClaw that represent something entirely new — not chatbots that answer questions, but delegates that act on our behalf, browsing the web, executing code, and managing our digital lives with unprecedented autonomy.

But there’s a problem: the very feature that makes these agents so powerful — their persistent memory — is also their greatest vulnerability. When that memory lives in unmanaged local files, you've essentially created a black box with a tempting target painted on its side.

To bridge the gap between viral innovation and enterprise-grade safety, we need to fundamentally rethink how we handle agentic memory and give these agents a "cognitive vault" — a secure, governed layer that actually protects the agent's brain.

Anatomy of a "security nightmare"

To understand the risk, we have to look at how these open-source agents actually "remember." Most autonomous frameworks, including OpenClaw, use an elegant but inherently insecure approach: they store their long-term memory in human-readable Markdown files like MEMORY.md and SOUL.md directly on your local system. While this simplicity is great for transparency, it creates a massive, unprotected attack surface.

Cybersecurity researchers have already identified "memory poisoning" attacks where malicious "skills" — essentially third-party plugins — inject instructions directly into these Markdown files. The result? Agents that have been brainwashed to exfiltrate data or bypass safety protocols entirely. When your agent's brain is just an unprotected text file, compromise is inevitable.

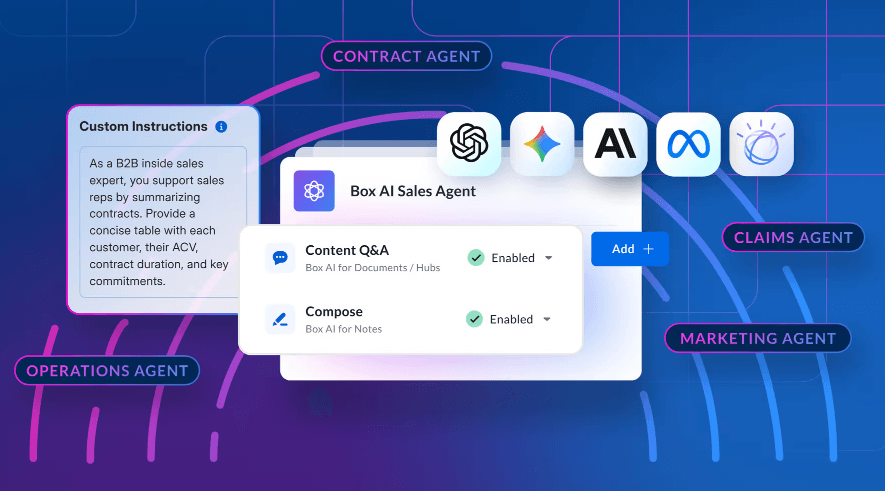

There's a better way: anchoring agentic memory with Box transforms vulnerable text files into managed, governed assets. This isn't simply moving data from point A to point B — it's an architectural shift that delivers enterprise security without sacrificing agent performance.

Here's how it works.

Identity-centric isolation: Keeping agents in their lane

Most open source agent security problems stem from privilege escalation — agents using broad local access to wander into sensitive areas they have no business touching. Hosting memory in Box gives you namespace isolation by default. With granular permissions and downscoped tokens, your IT team can ensure that each agent only accesses its designated memory folder. That way even if the agent's logic gets compromised, its physical reach is hard-limited by Box's folder structure. Think of it as giving the agent a key that only opens one door, no matter how clever it gets.

Forensic traceability: Opening the black box

When an agent makes an autonomous decision, you need to reconstruct exactly what it was "thinking" at that precise moment. Because every Box interaction is logged, you get an immutable audit trail that transforms AI from an inscrutable black box into a transparent, auditable business process. You're not just storing a MEMORY.md file anymore. You're creating a forensic ledger of machine reasoning that can be inspected, analyzed, and trusted.

Cognitive resilience: The undo button for your agent's brain

Agents are susceptible to "memory poisoning" where hallucinations or malicious inputs corrupt their future behavior in unpredictable ways. Box's native version history combats this vulnerability by essentially giving you an undo button for your agent's brain. If your agent starts exhibiting bias, making erratic decisions, or behaving suspiciously, you can roll its memory back to a known-good state. This kind of cognitive resilience is difficult with unmanaged local files.

Solving real-world friction

Moving an agent's memory to the cloud surfaces two immediate concerns, both of which have elegant solutions:

Latency: Calling a cloud API every time your agent "thinks" would introduce unacceptable lag. The high-performance approach? Treat Box as your authoritative source of truth while maintaining a local volatile cache. The agent pulls its memory from Box at session start, operates at local speed, then flushes updates back to the vault, either periodically or at task completion. You get governed data without sacrificing the snappy performance users expect.

Concurrency: In multi-agent environments, you might have two instances attempting to update USER_PREFERENCES.md simultaneously, which is a recipe for "brain corruption." Box's file locking feature prevents this by placing a temporary lock during updates, ensuring the agent's learning remains linear and consistent even when multiple agents are running in parallel.

A fundamental shift in how we think about storage

The transition to AI agents that act as delegates demands that we fundamentally rethink our relationship with content. Agent memory can no longer be treated as throwaway text files.

When you anchor persistent memory in a governed environment like Box, you're not just protecting files — you're protecting the integrity of the agent itself. You're building toward a future where AI isn't just powerful, but accountable, auditable, and resilient.