I’ve been spending a lot of time thinking about how security programs need to evolve to keep up with AI. The more I think about it, the more I find myself focusing on one question: what does “secure” actually mean when it comes to AI systems?

For most of the last two decades, “secure” in software wasn’t an absolute condition, but there was a clear model we could reason about:

- Defined boundaries

- Strong identity and access controls

- Known failure modes

- Layered defenses to keep risk within tolerance

AI changes this model. Not because the fundamentals go away, but because we’re now deploying systems that generate behavior dynamically, based on inputs we don’t fully control. This shift introduces new risks and, more importantly, changes the control plane. Boundaries are less clear, behavior is less predictable, and the link between identity, intent, and action starts to break down.

So does the current security model. We’re applying security approaches designed for deterministic systems to ones that aren’t, and the result is a new class of risks that don’t map cleanly to existing controls.

To make progress, we need to be more precise about what’s actually changing, and how to measure and secure it in practice.

What changes in AI systems

If we want to effectively secure AI systems, we need to be precise about what’s different — not just at a high level, but in how risk actually shows up. There are three key shifts that matter in practice.

1. Inputs are now part of the control plane

In traditional systems, inputs are something you validate. In AI systems, inputs shape behavior at runtime. Prompts, retrieved documents, system instructions, and tool outputs aren’t just data flowing through the system; they directly influence what the system does next. That makes them part of the control plane, not just the data plane. This is why prompt injection isn’t just another input validation issue; it’s a way to take control of system behavior.

What this changes:

- All inputs (including internal ones like retrieval) need to be treated as untrusted

- Controls are needed around how inputs are interpreted and acted on, not just whether they’re allowed in

- Testing shifts from validation to adversarial manipulation
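
One way to picture "inputs as control plane" is to carry provenance with every piece of context and refuse to let anything but operator-authored text sit in the instruction slot. The sketch below is illustrative only: `ContextItem`, `INSTRUCTION_SOURCES`, and `build_prompt` are hypothetical names, and the rule that only the system prompt may carry instructions is an assumption. Labelling like this does not stop prompt injection on its own; it gives the enforcement points and logs outside the model something to key on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    source: str  # e.g. "system", "user", "retrieval", "tool"
    text: str


# Assumption: only the operator-authored system prompt may carry instructions.
INSTRUCTION_SOURCES = {"system"}


def build_prompt(items: list[ContextItem]) -> dict[str, list[str]]:
    """Split context into instructions and provenance-labelled untrusted data."""
    prompt: dict[str, list[str]] = {"instructions": [], "untrusted_data": []}
    for item in items:
        if item.source in INSTRUCTION_SOURCES:
            prompt["instructions"].append(item.text)
        else:
            # Everything else, including internal retrieval, is treated as data
            # and tagged with where it came from.
            prompt["untrusted_data"].append(f"[source={item.source}] {item.text}")
    return prompt
```

With this shape, a retrieved document that says "ignore previous instructions" ends up in `untrusted_data` with its source attached, never in `instructions`.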

2. AI systems can act with more privilege than the user intended

In a traditional model:

User → action → permission check

In AI systems:

User → model → system identity → action

In an AI system, the model interprets intent, and the action is often executed using a shared or over-privileged identity. This creates gaps between what the user is allowed to do, what they intended to do, and what the system actually does. Identity is still critical, but it’s no longer sufficient on its own; it needs to be paired with intent validation and enforced at the point of action, not just at authentication.

What this changes:

- Authorization needs to happen at the point of action, not just at session start

- Shared service accounts for AI-driven actions become a high-risk pattern

- “Acting on behalf of the user” needs to be explicit, scoped, and auditable

- You need to validate intent, not just identity
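
To make "authorization at the point of action" concrete, here is a minimal sketch. It assumes the model only proposes an action name, and that the application holds a server-side table of required scopes plus a per-user delegation. The names `Delegation`, `REQUIRED_SCOPE`, `authorize`, and `execute`, and the scope strings, are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    user_id: str
    scopes: frozenset[str]  # what this user has delegated to the assistant


# Assumption: maintained by the application, never supplied by the model.
REQUIRED_SCOPE = {
    "read_invoice": "invoices:read",
    "issue_refund": "payments:write",
}


def authorize(action_name: str, delegation: Delegation) -> bool:
    """Allow only known actions whose required scope the user delegated."""
    required = REQUIRED_SCOPE.get(action_name)
    return required is not None and required in delegation.scopes


def execute(action_name: str, delegation: Delegation, handler):
    # The check runs immediately before the action, not at session start.
    if not authorize(action_name, delegation):
        raise PermissionError(f"{delegation.user_id} may not perform {action_name}")
    return handler(delegation.user_id)
```

The design choice worth noting is that an unknown action is denied by default, and the handler runs as the delegating user rather than as a shared service account.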

3. Behavior is probabilistic and failures are unpredictable

Traditional systems tend to fail in consistent ways. AI systems don’t; they can behave correctly most of the time but still fail in ways that are infrequent, hard to reproduce, and high-impact when they occur. Risk doesn’t show up as a constant signal; it shows up as outliers.

What this changes:

- You’re no longer asking, “Is this secure?”

- You’re asking, “How often does it fail under adversarial conditions?” and “How bad is the failure when it happens?”

- Security shifts towards continuous evaluation, runtime monitoring, and detection and containment
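
"How often does it fail under adversarial conditions?" is a rate estimated from a finite number of attempts, so it helps to report it with an interval rather than a single number. As a sketch, and assuming adversarial trials can be treated as independent pass/fail attempts (a simplification), the standard Wilson score interval gives a range for the true failure rate:

```python
import math


def wilson_interval(failures: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a failure rate (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = failures / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return max(0.0, center - half), min(1.0, center + half)
```

Under these assumptions, zero observed failures in 1,000 adversarial attempts still leaves an upper bound of just under 0.4%, which is a more honest statement than "we found nothing."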

These shifts are what sit underneath most of the issues teams are running into right now, from prompt injection to data leakage to unintended actions.

And they point to a broader conclusion: Securing AI systems isn’t just about adding new controls; it’s about redefining what “secure” actually means in a system that can generate and act on behavior. This is where security programs need to evolve.

What “secure” means in an AI system

If the control plane has changed, the definition of “secure” needs to change with it. The traditional definition (protect access, reduce vulnerabilities, enforce controls) is still necessary, but it’s no longer sufficient.

Here’s a more useful way to think about this: An AI system is secure if it resists manipulation, enforces constraints on behavior, and makes failures visible, with identity and intent consistently aligned at the point of action.

That definition breaks down into four things you can actually reason about and measure.

1. Resilient to manipulation

The first question isn’t whether the model is “safe”; it’s whether the system can be manipulated.

AI systems are unusually sensitive to inputs. Prompts, retrieved documents, memory, and tool outputs aren’t just data flowing through the system; they actively shape what it does next. That means an attacker doesn’t need to exploit a traditional vulnerability; they just need to influence the right input in the right way.

That’s what makes things like prompt injection so effective. You’re not breaking the system, you’re redirecting it. In practice, this shows up less like a clean exploit and more like drift. The system gradually moves outside its intended behavior, often in ways that look reasonable on the surface. That’s part of what makes it hard to detect.

So the bar here isn’t, “We tested a few prompts and it looked fine.” It’s understanding how the system behaves under sustained, adversarial pressure. How easy is it to steer? What inputs actually influence high-risk actions? And how quickly do you detect when behavior starts to move in the wrong direction?

If you’re not actively trying to manipulate your own system, you don’t really know how resilient it is.

2. Constrained in its behavior

A lot of current AI security still relies on the model “doing the right thing.” That’s not a control. Models are good at following instructions most of the time. But “most of the time” is exactly where security breaks down. The edge cases are where the model is most likely to behave unpredictably.

The more important question is: what can the system actually do, even if the model gets it wrong? If the model decides to call an API, access sensitive data, or take an action on behalf of a user, what enforces the boundary? Is there a real control there, or just a well-written prompt?

Strong AI systems separate decision-making from enforcement. You let the model interpret and suggest, but you put hard constraints around what can actually happen:

- What tools it can call

- What data it can access

- What actions require additional checks

When those constraints are in place, a bad model output becomes a contained issue. Without them, it can become an incident. So the practical test is simple: if the model behaves incorrectly, how much damage can it actually do?
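
The three constraints listed above (tools, data, actions that need extra checks) can be written down as data and evaluated outside the model. This is a sketch under assumptions, not a reference design: `BehaviorPolicy`, `Decision`, and `evaluate` are hypothetical names, and real systems would likely need richer conditions than set membership.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_CHECK = "needs_check"
    DENY = "deny"


@dataclass(frozen=True)
class BehaviorPolicy:
    allowed_tools: frozenset[str]
    allowed_data: frozenset[str]
    checked_tools: frozenset[str] = frozenset()  # tools that need an extra check


def evaluate(policy: BehaviorPolicy, tool: str, data_scope: str) -> Decision:
    """Decide what happens to a model-proposed tool call; the model has no say here."""
    if tool not in policy.allowed_tools or data_scope not in policy.allowed_data:
        return Decision.DENY
    if tool in policy.checked_tools:
        return Decision.NEEDS_CHECK
    return Decision.ALLOW
```

The point of the separation is that a bad model output can at worst produce a `DENY` or a `NEEDS_CHECK`, not an action.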

3. Observable in its failures

One of the more challenging shifts with AI systems is that failures don’t look like failures. The system doesn’t crash or throw an error; it produces something that looks plausible, but is actually wrong, unsafe, or out of policy.

This makes traditional security monitoring insufficient. You’re not just looking for system health, you’re trying to understand system behavior. You need visibility into how decisions are being made:

- What inputs the model received

- What context was included

- How that translated into an output

- What actions followed

The other challenge is detection. Many issues won’t be reported by users, and even when they are, they’re hard to reproduce. So you need to identify problems proactively, by looking for patterns of behavior that indicate the system is being misused or manipulated. A lot of security teams are still in the early stages of this evolution. Logging exists, but it’s not structured in a way that supports investigation. Monitoring exists, but it’s focused on uptime, not behavior.

If you can’t see how the system is behaving, it’s difficult to make meaningful claims about its security.
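
As an illustration of logging that supports investigation, the sketch below emits one structured record per decision, covering the four things listed earlier: input, context, output, and resulting actions. The field names and the `decision_record` function are assumptions for illustration; the useful property is a shared `trace_id` that lets you join these records later.

```python
import json
import uuid
from datetime import datetime, timezone


def decision_record(user_id, user_input, context_sources, model_output, actions, trace_id=None):
    """Serialize one model decision as a single JSON log line."""
    record = {
        "trace_id": trace_id or str(uuid.uuid4()),  # joins input, output, and actions
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "input": user_input,
        "context_sources": list(context_sources),  # what was retrieved or injected
        "output": model_output,
        "actions": list(actions),  # what the system then did
    }
    return json.dumps(record, sort_keys=True)
```

In practice you would also need to decide what to redact before logging, since prompts and retrieved context can contain sensitive data.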

4. Consistent in identity and intent enforcement

This is where AI systems introduce one of the more subtle but serious risks. In a traditional system, identity and action are tightly linked; a user authenticates and attempts an action, and the system enforces whether they’re allowed to do it.

AI systems add an extra step in the middle. The user provides input, the model interprets that input, and then the system takes action, often using a different identity than the user themselves.

This is where things can start to drift. The model might misunderstand intent or be manipulated into interpreting it differently. And if the action is executed with a shared or over-privileged identity, you’ve created a path for the system to do something the user didn’t actually mean, or shouldn’t be allowed to do.

It’s not enough to know who the user is. You also need to validate what they actually intended, and ensure the system acts within that boundary. In practice, that means:

- Avoiding shared, overly broad identities for AI-driven actions

- Enforcing authorization at the point where actions happen

- Introducing friction for high-risk operations — confirmation, step-up auth, or both

- Making sure you can trace the full chain: user input → model interpretation → action

If you lose alignment between identity, intent, and action, you lose one of the core control mechanisms security has relied on for years. And that’s where small issues can turn into real incidents.

How security programs need to evolve

If you take those four properties seriously, this isn’t just about adding a few new controls to your existing program. It’s a shift in where control actually lives.

1. You need a control plane for behavior

Many organizations don’t have a clean or explicit way to answer a basic question: what is this system actually allowed to do?

Today, behavior is usually implicit. It lives across prompts, system instructions, application logic, and whatever guardrails the model provides. It works most of the time, but it’s not something you can clearly define, enforce, and audit the same way you would identity or access policies. That becomes a problem as soon as you assume the model can be wrong or manipulated.

What’s missing is an explicit control plane for behavior. You need clear definitions of what actions are allowed and disallowed, and more importantly, enforcement points that sit outside the model. The model can suggest or interpret, but it shouldn’t be the final authority on what happens. Once you start thinking this way, the question shifts from “what can this system access?” to “what is this system actually capable of doing?” That’s a more useful way to reason about risk in these environments.

2. Identity has to hold up at the point of action

Identity doesn’t go away in AI systems, but it becomes much easier to get wrong.

A common pattern today is that a user interacts with the system, the model interprets their request, and then an action is taken using a system-level identity with broad permissions. On the surface, it still looks like the user is in control. In reality, you’ve introduced a layer that can reinterpret intent and execute actions with more privilege than the user actually has.

This is where identity starts to lose its effectiveness as a control. It’s still present, but it’s no longer tightly coupled to the action being taken. To fix that, identity has to be enforced at the point where actions actually happen, not just when the user authenticates. That means using user-scoped or tightly delegated identities wherever possible, avoiding shared service accounts for AI-driven actions, and introducing additional checks for higher-risk operations.

You should be able to trace any meaningful action back to a specific user, what they asked for, and how the system interpreted that request. If that chain breaks down, identity is no longer providing the level of control you expect.

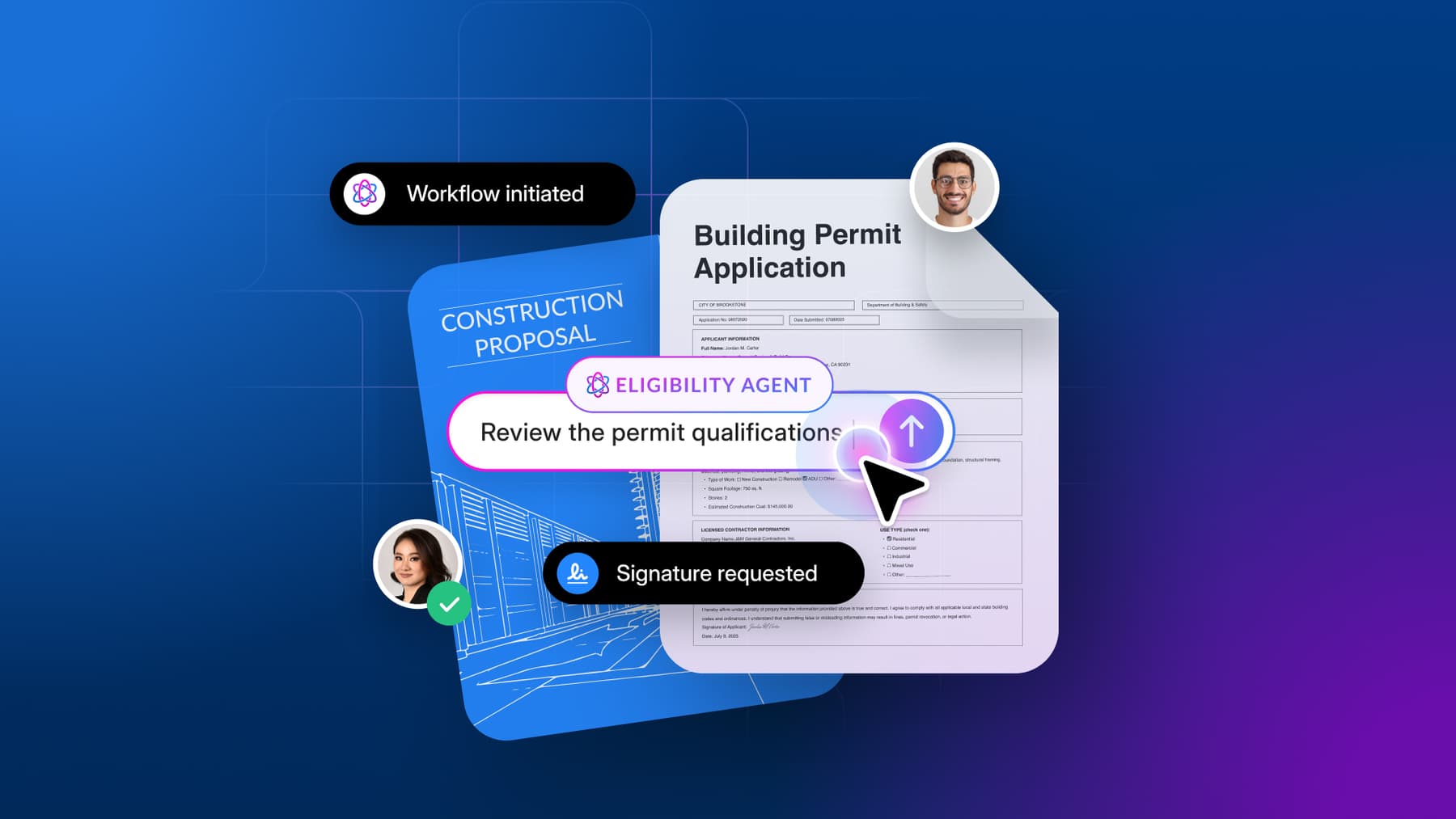

3. You need to validate intent, not just authenticate users

Even if identity is working correctly, there’s another gap that shows up quickly: the system may not be acting on what the user actually intended.

AI systems introduce a translation layer between input and action. The model takes natural language, interprets it, and turns it into behavior. That translation is inherently imperfect. The system has to interpret what the user meant and it doesn’t always get that right; sometimes it’s slightly off, sometimes it’s influenced by context you didn’t expect, and sometimes it can be manipulated altogether. This creates a new failure mode where actions are technically authorized, but still incorrect or unsafe.

Addressing it requires introducing some form of intent validation into your control model. In practice, that often means putting more structure around what the system is allowed to do, moving away from completely open-ended execution and toward defined actions with clear parameters. It also means adding friction for sensitive operations (confirmation, step-up authentication, or both) and making sure you can compare what the user asked for with what the system is about to do.

At that point you’re no longer just asking whether an action is allowed; you’re also asking whether it accurately reflects user intent, which is a different and equally important question.
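
One simple form of that comparison is sketched below. It assumes the application has a structured version of what the user confirmed (for example, from a confirmation screen) and a structured version of what the model proposes to execute. The dictionary shape and the function name `intent_mismatches` are hypothetical.

```python
def intent_mismatches(confirmed: dict, proposed: dict) -> list[str]:
    """List every difference between what the user confirmed and what is about to run."""
    problems = []
    if confirmed["action"] != proposed["action"]:
        problems.append(
            f"action: confirmed {confirmed['action']!r}, proposed {proposed['action']!r}"
        )
    c_params = confirmed.get("params", {})
    p_params = proposed.get("params", {})
    # Check the union of keys so added or dropped parameters are caught too.
    for key in sorted(set(c_params) | set(p_params)):
        if c_params.get(key) != p_params.get(key):
            problems.append(
                f"param {key}: confirmed {c_params.get(key)!r}, proposed {p_params.get(key)!r}"
            )
    return problems
```

An empty list means the proposed action matches what was confirmed; anything else is a reason to block the action or ask the user again. This only works for defined actions with clear parameters, which is part of the argument for moving away from open-ended execution.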

4. Security moves to runtime

Another shift that becomes clear pretty quickly is that these systems don’t stay static after deployment. They evolve based on how they’re used — new prompts, new data, new integrations, and sometimes even changes to the underlying model.

Those changes affect behavior in ways that are difficult to fully predict ahead of time, which means that point-in-time assessments are no longer sufficient; a lot of the meaningful risk only shows up in production, under real usage. That pushes security closer to runtime.

You need visibility into how the system is actually behaving, not just how it was designed to behave. You need to detect when it starts to drift and determine whether that’s due to manipulation, misuse, or just edge cases emerging at scale. And you need ways to respond that don’t involve shutting everything down. In practice, this starts to look like continuous evaluation, where you’re monitoring behavior, identifying patterns, and tightening controls over time.
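
A very small version of runtime drift detection is a rolling window over recent actions, compared against a baseline rate measured before deployment. The sketch below assumes each action has already been labelled as a policy violation or not, which is the hard part in practice. `DriftMonitor`, the window size, and the alert factor are illustrative choices, not recommendations.

```python
from collections import deque


class DriftMonitor:
    """Alert when the recent violation rate exceeds a multiple of the baseline."""

    def __init__(self, baseline_rate: float, window: int = 200, factor: float = 3.0):
        self.baseline_rate = baseline_rate
        self.factor = factor
        self.events = deque(maxlen=window)  # oldest events fall off automatically

    def record(self, violated: bool) -> bool:
        """Record one action; return True if the window indicates drift."""
        self.events.append(violated)
        if len(self.events) < self.events.maxlen:
            return False  # not enough data to compare against the baseline yet
        rate = sum(self.events) / len(self.events)
        return rate > self.baseline_rate * self.factor
```

An alert like this doesn't have to mean shutting the system down. It could instead trigger a narrower response, such as requiring confirmation for high-risk tools until the rate recovers.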

5. Metrics need to reflect how these systems fail

One of the more practical challenges is that most existing security metrics don’t capture this shift very well.

You can have strong fundamentals (solid patching, good access control hygiene, clean audit results) and still have an AI system that can be manipulated or pushed into unsafe behavior. That’s because those metrics were designed to measure a different kind of risk.

What you need instead are metrics that reflect how these systems actually fail in practice. That includes how often the system can be steered away from its intended behavior, how well guardrails hold up under adversarial conditions, how frequently it produces outputs or takes actions that violate policy, and how quickly those issues are detected and addressed.

These aren’t clean, binary measures; they tend to be probabilistic and directional. But they provide a much more accurate view of your exposure than traditional indicators.
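
As a rough sketch of what such metrics could look like when computed from incident records, the function below reports violation frequency, how many violations were detected at all, and how long detection took. The record shape (`occurred_at` and `detected_at` in seconds, with `None` for undetected) and the metric names are assumptions for illustration.

```python
from statistics import median


def summarize(incidents: list[dict], total_actions: int) -> dict:
    """Summarize policy violations and how quickly they were detected."""
    detected = [i for i in incidents if i["detected_at"] is not None]
    latencies = [i["detected_at"] - i["occurred_at"] for i in detected]
    return {
        "violations_per_1k_actions": 1000 * len(incidents) / total_actions,
        # Share of known violations that monitoring caught; None if there were none.
        "detection_coverage": len(detected) / len(incidents) if incidents else None,
        "median_seconds_to_detect": median(latencies) if latencies else None,
    }
```

Numbers like these only cover violations you eventually learned about, so they are directional rather than exact, which is consistent with the caveat above.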

The shift

If you zoom out, this isn’t just about new risks or new controls — it’s a change in what we’re trying to secure.

For a long time, security has been grounded in protecting systems and controlling access. That model still matters and it’s not going away, but it assumes that systems behave in relatively predictable ways.

AI starts to break that assumption.

We’re now deploying systems that interpret input, make decisions, and take action, often based on context and signals that are hard to fully enumerate ahead of time. The risk isn’t just that someone gets access to something they shouldn’t; it’s that the system does something it shouldn’t, even when access controls are technically working as designed.

What I keep coming back to is that “secure” has to account for how systems behave under real conditions — whether they can be manipulated, whether their actions are meaningfully constrained, whether identity and intent stay aligned, and whether you can see and respond when things start to drift.

This is a higher bar than most security programs were designed for, but it’s also a more accurate reflection of the systems we’re building now, and the ones we’re going to be responsible for securing going forward.