Corporate legal teams across industries are moving from AI exploration to everyday use.

A March 2026 report from FTI Consulting shows that generative AI adoption in corporate legal departments nearly doubled in a year. According to the report, legal teams most often use AI for summarization, research, e-discovery, document review, contract drafting, and contract analysis. Another 2026 report, from GCAI, found that in-house general counsel are saving an average of 14 hours a week thanks to AI.

Yet the risk of misusing generative AI is huge, and so are the penalties. In late 2025, the Sixth Circuit Court of Appeals imposed more than $100,000 in sanctions on two lawyers for citing hallucinated cases in their appellate briefs. Within corporate legal organizations, the cost can be much greater, both financially and in terms of business reputation.

While AI adoption accelerates, governance can be the missing piece. Without the right controls in place — content permissions, classification, auditability, and provenance — legal teams cannot use AI securely and trust its outputs. Maninder Sagoo, VP of Box’s Commercial Legal team, explains that this gap creates a precarious environment where the very tools meant to drive efficiency can also expose a company’s most sensitive content.

What to know:

- “Good enough permissions” are simply not good enough in the modern world of AI

- Governance has to move from policy to technical enforcement: from high-level principles to operational controls at the content layer

- AI agents should be grounded in internal legal documentation, approved playbooks, clause libraries, prior agreements, and business rules

The end of “good enough” permissions

For years, many legal teams operated with “good enough” permissions: folder structures slightly too open, but protected by the natural friction of legacy systems. If a document was buried 10 folders deep, the risk of accidental discovery was low simply because finding it required time, context, and persistence. Call it the security-by-obscurity model.

AI breaks that model. AI indexes and retrieves content instantly, no matter how deeply it’s buried, in response to natural-language prompts, removing the friction that once masked imperfect permissions. Users no longer need to know where a file lives to find it. They can simply ask for a summary of recent compensation discussions, sensitive matter files, or internal negotiations, and the system will retrieve what its permissions allow.
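
To make the point concrete, here is a minimal sketch of permission-aware retrieval. It is an illustration under stated assumptions, not a description of any real product: the `Document` type, the `retrieve` function, the group names, and the file paths are all hypothetical. It shows that a query matches content regardless of folder depth, so the only thing standing between a user and a file is the permission check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    path: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this file (hypothetical model)


def retrieve(query: str, user_groups: set, corpus: list) -> list:
    """Return paths of documents that match the query and that the user may read.

    Folder depth plays no role: a match is a match, however deeply it is nested.
    """
    terms = query.lower().split()
    hits = []
    for doc in corpus:
        if not (doc.allowed_groups & user_groups):
            continue  # permission check happens before any content is inspected
        if any(term in doc.text.lower() for term in terms):
            hits.append(doc.path)
    return hits


corpus = [
    # Overly broad legacy permission on a deeply nested file.
    Document("/hr/2024/q3/archive/old/comp/review.txt",
             "Executive compensation discussion notes",
             frozenset({"everyone"})),
    # Correctly scoped file.
    Document("/legal/matters/acme/memo.txt",
             "Privileged memo on the Acme negotiation",
             frozenset({"legal"})),
]

sales_user = {"everyone", "sales"}
print(retrieve("compensation", sales_user, corpus))
print(retrieve("negotiation", sales_user, corpus))
```

In this sketch the sales user surfaces the compensation notes with a single query because the file is readable by `everyone`, while the correctly scoped legal memo stays out of reach.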

In that sense, poor permissions become an exfiltration plan. An exfiltration plan isn’t necessarily malicious, Sagoo says; it’s the condition in which weak controls and poor governance let AI inadvertently expose or remove sensitive information. If the model can reach too much, it can reveal too much. Old permission gaps are no longer hidden operational weaknesses; they become active exposure paths.

Governance has to move from policy to technical enforcement

To close the gap, Sagoo explains that legal teams must move from high-level principles to operational controls.

First, they need automated classification. Sensitive content such as PII, financial information, privileged communications, and deal documents should be identified and labeled automatically so downstream security policies can be enforced consistently.
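
As a rough illustration of how labels can drive downstream policy, the sketch below tags text with simple pattern rules and then checks those labels before content is passed to an AI tool. Everything here is an assumption made for the example: the `RULES` table, the label names, and the `may_send_to_ai` helper are hypothetical, and a production classifier would likely rely on far more than regular expressions.

```python
import re

# Hypothetical rule table: label -> pattern that suggests the label applies.
RULES = {
    "PII": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-shaped numbers
    "PRIVILEGED": re.compile(r"attorney[- ]client|privileged", re.IGNORECASE),
    "DEAL": re.compile(r"\b(merger|acquisition|term sheet)\b", re.IGNORECASE),
}


def classify(text: str) -> set:
    """Return every sensitivity label whose pattern appears in the text."""
    return {label for label, pattern in RULES.items() if pattern.search(text)}


def may_send_to_ai(labels: set, cleared_labels: set) -> bool:
    """Allow content only if all of its labels are ones the caller is cleared for."""
    return labels <= cleared_labels


labels = classify("Attorney-client privileged: draft term sheet for the merger")
print(sorted(labels))
print(may_send_to_ai(labels, cleared_labels={"DEAL"}))
```

The design choice worth noting is that the policy check consumes labels rather than raw content, so the same rule can be enforced consistently wherever the document travels.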

Second, ethical walls and granular access controls. For corporate legal teams, that means ensuring that matter-specific information, internal investigations, M&A work, executive issues, and compensation-related content are segmented appropriately and not merely buried in nested folders.
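
One way to picture an ethical wall is as an explicit deny list that overrides any other grant. The sketch below is a simplified, hypothetical model (the `Matter` type, `can_access` function, and user names are invented for illustration) showing that segmentation is enforced by rule rather than by where a file happens to sit.

```python
from dataclasses import dataclass, field


@dataclass
class Matter:
    name: str
    team: set                                      # users staffed on the matter
    walled_off: set = field(default_factory=set)   # users screened from the matter


def can_access(user: str, matter: Matter, admins: set) -> bool:
    """Deny overrides allow: a walled-off user is blocked even if otherwise entitled."""
    if user in matter.walled_off:
        return False
    return user in matter.team or user in admins


deal = Matter("Project Falcon (M&A)", team={"ana", "raj"}, walled_off={"lee"})
admins = {"lee", "sam"}

print(can_access("ana", deal, admins))  # staffed on the matter
print(can_access("lee", deal, admins))  # admin, but screened by the wall
print(can_access("sam", deal, admins))  # admin with no conflict
```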

Third, retention and legal-hold workflows that are AI-aware. AI can help identify sensitive repositories and support governance actions, but those actions must still be tied to formal records and legal processes.

Fourth, auditability and provenance. It’s no longer enough to know that a user opened a file. Organizations increasingly need to know how an AI-generated answer was produced, which documents were used, and what chain of access led to the output. The content layer must provide unified governance, audit trails, and compliance frameworks for AI-generated work.
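
A provenance record for an AI-generated answer might look something like the sketch below. The field names, the `provenance_record` function, and the sample path are assumptions for illustration only; the point is that each answer is tied to the user, the prompt, and the exact source documents behind it.

```python
import hashlib
import json
from datetime import datetime, timezone


def provenance_record(user: str, prompt: str, sources: list, answer: str) -> dict:
    """Build one audit entry linking an AI answer to the documents it drew on.

    `sources` is a list of (path, text) pairs for the documents that were used.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "prompt": prompt,
        # A content hash per source makes later edits to a file detectable.
        "sources": [
            {"path": path, "sha256": hashlib.sha256(text.encode()).hexdigest()}
            for path, text in sources
        ],
        "answer_sha256": hashlib.sha256(answer.encode()).hexdigest(),
    }


record = provenance_record(
    user="jdoe",
    prompt="Summarize the indemnification terms",
    sources=[("/contracts/acme_msa.txt", "Indemnification: each party ...")],
    answer="Both parties indemnify each other for third-party claims.",
)
print(json.dumps(record, indent=2))
```

Hashing the sources and the answer, rather than storing them inline, keeps the audit log compact while still letting a reviewer verify that nothing changed after the fact.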

Governed agentic AI for contract reviews: The Box approach

Beyond one-off tasks like legal research and contract analysis, the next wave of AI in legal departments will execute multiple steps in sequence and automate entire workflows. Such agentic workflows can span document generation, review, negotiation, signature, and storage.

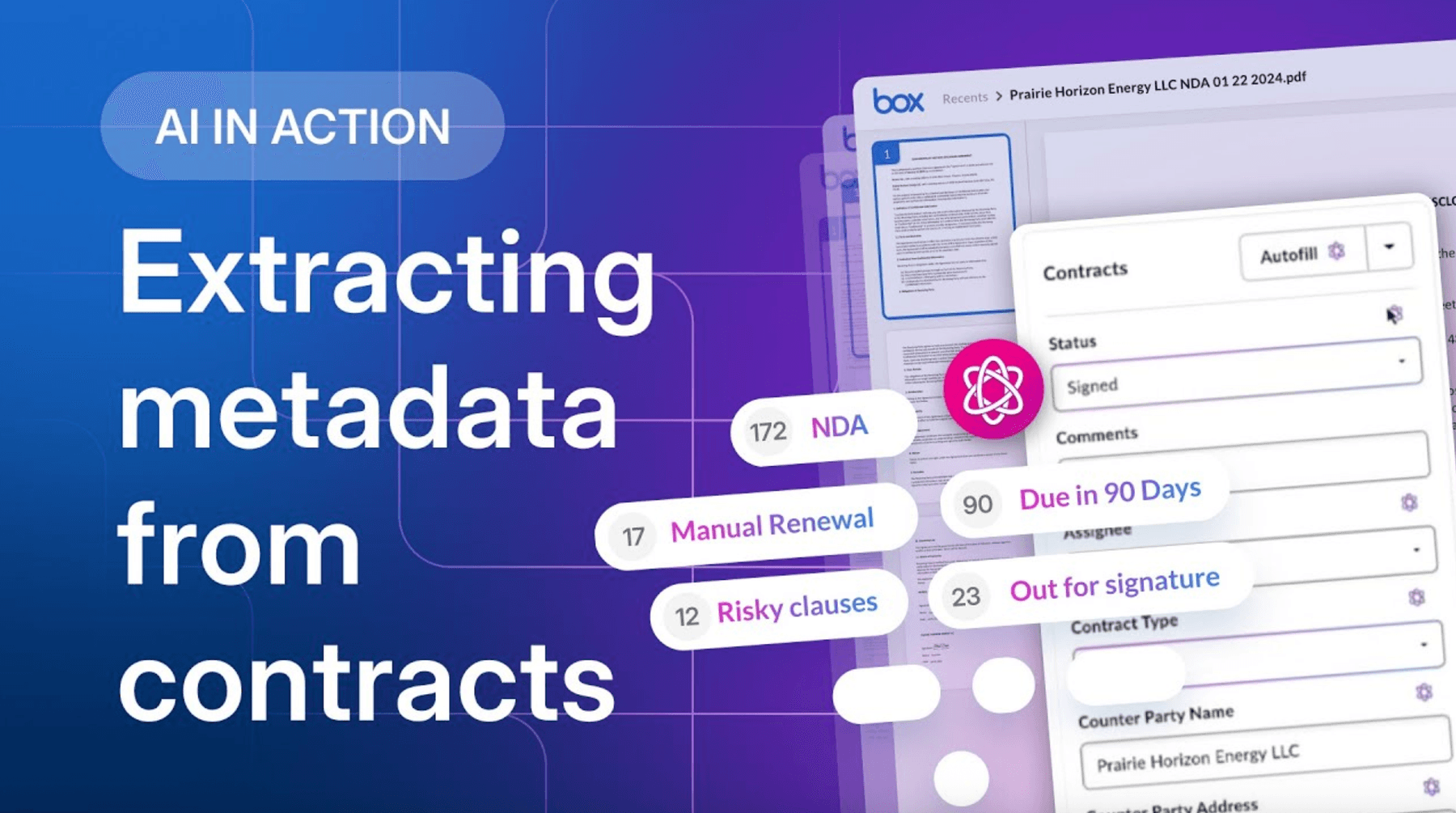

AI agents have the power to extract contract metadata from intake forms, identify high-risk clauses, and track signature status and risk in dashboards — just one example of a common legal workflow that can already be automated by AI.

Here at Box, we’re in the process of applying agentic AI to our contract review process. Reviewing a Master Service Agreement (MSA) on customer paper can currently take as long as eight hours. By automating with custom AI agents, Sagoo’s team aims to cut this to one hour, an 87.5% reduction.

It’s important to note that in this paradigm, the Box AI Agent won’t operate as a free-floating assistant. It’s grounded in internal legal documentation, approved playbooks, clause libraries, prior agreements, and business rules, all of which the agent can access and relies on as core knowledge to complete its work. In practice as well as in theory, the agent only accesses content it’s permitted to see.

“The agent is working on your instructions,” Sagoo explains. “It’s not making up its own risk assessment. It’s working with knowledge of your corporate documentation, your risk profile, your business model.”

In other words, the agent is both restricted (it can’t see everything) and knowledgeable (it can access what’s relevant).

That distinction matters. The goal is not just faster AI, but governed AI.

Governance will define the next phase of legal AI

AI success in legal organizations will not be defined by whether teams can generate summaries or draft redlines with AI tools. Many already can.

Sagoo says the differentiator is whether they can string legal tasks together into automated agentic workflows, and do it in a way that’s secure, auditable, and operationally trustworthy.

AI offers corporate legal teams a genuine opportunity to elevate legal’s strategic role in the business, but that opportunity only compounds if governance keeps pace. This means pairing adoption with classification, granular permissions, ethical walls, auditability, and clear provenance. Governance isn’t a brake on AI; it’s the infrastructure that makes AI trustworthy enough to scale.

To learn more, check out how Agentic AI solves content classification in an accelerating world.