AI is easy to try and hard to use well. Most people start with a simple consumer app like Claude or ChatGPT, or a feature within an app they already use, where the risks feel distant. But the stakes change once AI enters the workplace, where sensitive data, customer trust, internal controls, and business judgment all come into play.

Organizations now have the tools to use AI in every line of business. But are they ready to use it responsibly?

In a recent conversation with Jeff Chambers, VP of IT Technology at WongDoody, a global creative agency, we talked about the challenge of moving from curiosity and experimentation to real business value without creating new risks.

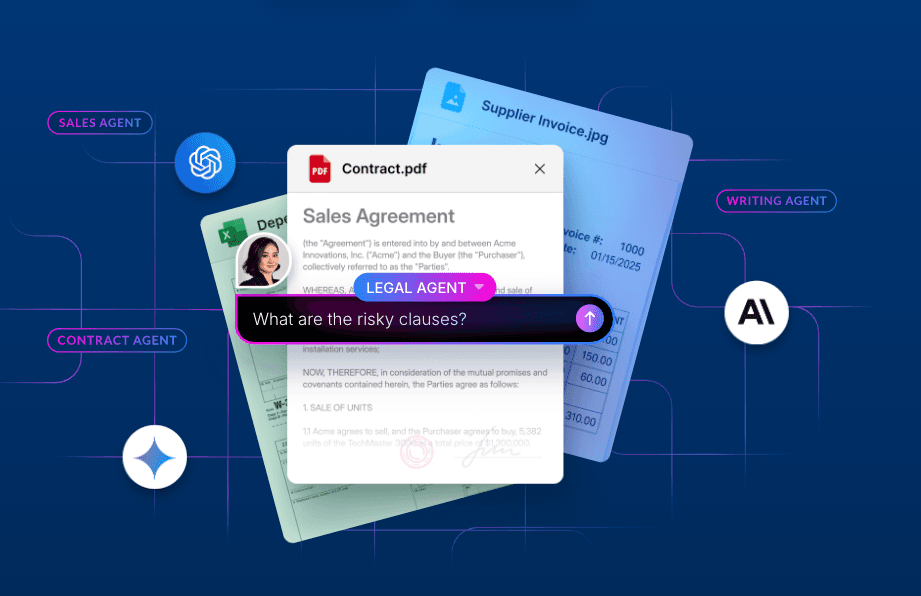

Among other applications, WongDoody has used Box AI Studio to build custom AI agents that automate responses to requests for proposals, cutting the time spent on such tasks from 24 hours to about 15 minutes. Chambers' approach to AI at WongDoody is both curious and careful. Here’s what he’s learned.

Key takeaways:

- Becoming an AI-first enterprise starts with trust, governance, and strong security foundations before speed or scale

- AI creates the most value when it supports human judgment instead of replacing it

- Successful AI adoption is a gradual process of testing, review, and change management

The first thing that breaks is security

A lot of public discussion about AI still starts with productivity. How much faster can teams work? How much more content can they produce? How many tasks can be automated?

For Chambers, becoming AI-first is not about chasing the newest model or the biggest productivity leap from the start. It is about putting the right structure around technology so people can use (and scale) it with confidence.

That’s because, as he says, “The very first thing that typically breaks is security.”

Consumer AI use doesn’t translate well to the workplace, where AI can touch confidential files, regulated information, and customer content. AI may feel simple on the surface, but enterprise use depends on much more than access to a model. Businesses must think through permissions, privacy, oversight, and accountability before the tools spread too far.

Governance makes experimentation useful

Governance is sometimes considered a bottleneck when it comes to enterprise AI innovation, but Chambers argues that governance is the foundation of both experimentation and scaling in production.

He describes an approach that allows teams to explore new tools in a controlled environment while putting them through review. “We’re never going to scale anything unless it goes through three or four reviews,” he says.

That may sound cautious, but it’s also realistic.

AI tools are changing too quickly for a one-time policy to be enough. New models arrive constantly. Capabilities improve. Risk changes. What appeared acceptable a few months ago may need a second look today. Sequential reviews examine what’s allowed, what’s restricted, what data can be used, where a model can run, and how decisions are enforced.

Responsible AI is a working discipline. As long as technology keeps evolving, so will governance. This is critical at WongDoody, where AI agents have to keep content compliant with ISO 42001, data residency requirements across four regions, and cybersecurity insurance standards.
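As a rough illustration, the questions a review asks (what's allowed, what's restricted, what data can be used, where a model can run) could be written down as a policy check. The sketch below is only that: the names `AIUsagePolicy`, `AIUseRequest`, and `evaluate_request`, the tool and region labels, and the three-review threshold are all hypothetical, and none of it represents WongDoody's actual process or any product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsagePolicy:
    """Hypothetical policy record; every field name here is an assumption."""
    allowed_tools: frozenset
    restricted_data_classes: frozenset
    allowed_regions: frozenset
    required_reviews: int = 3  # assumed threshold, echoing "three or four reviews"


@dataclass(frozen=True)
class AIUseRequest:
    """Hypothetical description of one proposed AI use case."""
    tool: str
    data_classes: frozenset
    region: str
    reviews_passed: int


def evaluate_request(policy: AIUsagePolicy, request: AIUseRequest) -> list[str]:
    """Return the reasons a request is blocked; an empty list means it may proceed."""
    problems = []
    if request.tool not in policy.allowed_tools:
        problems.append(f"tool '{request.tool}' is not approved")
    blocked = request.data_classes & policy.restricted_data_classes
    if blocked:
        problems.append("uses restricted data: " + ", ".join(sorted(blocked)))
    if request.region not in policy.allowed_regions:
        problems.append(f"region '{request.region}' is not permitted")
    if request.reviews_passed < policy.required_reviews:
        problems.append(
            f"only {request.reviews_passed} of {policy.required_reviews} reviews passed"
        )
    return problems


# Illustrative data only.
policy = AIUsagePolicy(
    allowed_tools=frozenset({"approved-assistant"}),
    restricted_data_classes=frozenset({"client-confidential"}),
    allowed_regions=frozenset({"us", "eu"}),
)
ok_request = AIUseRequest(
    tool="approved-assistant",
    data_classes=frozenset({"public-marketing"}),
    region="eu",
    reviews_passed=3,
)
risky_request = AIUseRequest(
    tool="unvetted-chatbot",
    data_classes=frozenset({"client-confidential"}),
    region="apac",
    reviews_passed=1,
)
```

Because the policy is plain data, it can be revised as models, capabilities, and risks change, which is the point of treating governance as ongoing rather than one-time.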

The foundation matters more than the model

Along with governance, Chambers returns again and again to the basics: where data lives, how access is structured, which systems connect to each other, and whether permissions actually reflect how the business wants information to move.

Many organizations focus too much on picking the right model and not enough on fixing the systems around it. His warning is simple: AI will work with the access it’s given. If a person can reach content, the system may be able to reach it too.
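To make the access point concrete, here is a minimal sketch that assumes an AI assistant retrieves content on behalf of a user and inherits that user's permissions. The `Document` class, the `build_ai_context` function, and the file names are hypothetical and for illustration only. They do not describe how any particular product enforces permissions.

```python
from dataclasses import dataclass


@dataclass
class Document:
    """Hypothetical document record with a simple per-user access list."""
    name: str
    allowed_users: set


def documents_visible_to(user: str, documents: list) -> list:
    """Return only the documents this user is permitted to open."""
    return [doc for doc in documents if user in doc.allowed_users]


def build_ai_context(user: str, documents: list) -> list:
    """Assumed behavior: the assistant can draw on exactly what the user can reach."""
    return [doc.name for doc in documents_visible_to(user, documents)]


# Illustrative data: the salary file was shared too broadly by mistake.
documents = [
    Document("brand_guidelines.pdf", {"intern", "designer", "hr_lead"}),
    Document("salary_bands.xlsx", {"intern", "hr_lead"}),  # overshared
    Document("client_contract.docx", {"account_director"}),
]

intern_context = build_ai_context("intern", documents)
designer_context = build_ai_context("designer", documents)
```

In this example the assistant is doing nothing wrong when it surfaces `salary_bands.xlsx` to the intern. The permission was already too broad, and the assistant simply makes that oversharing quicker to discover.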

Remember, AI does not erase weak foundations. It exposes them and, in some cases, accelerates their failure.

Flashy pilots aside, the companies best positioned to use AI well may be the ones that have first done the harder work of cleaning up access, simplifying content systems, and rethinking how sensitive information is handled before they scale anything.

The right place for AI: Innovation, not automatic judgment

Now for the innovation part.

One of WongDoody’s strongest returns on AI exploration has come from using it to help evaluate and respond to requests for proposals (RFPs) and requests for information (RFIs). With AI, teams can ingest long proposal documents, assess whether an opportunity fits the business, and generate a draft response quickly.

This type of use case shows where AI can be genuinely valuable: not as a replacement for judgment, but as a way to sharpen it. AI can help create a more objective first pass at RFPs and RFIs by assessing fit, scope, and potential value.

But Chambers is careful not to overstate what that means. “We always have a human not just in the loop, but in the lead,” he says. That distinction is important. The point is not to hand over decisions. The point is to give people better support as they make them.

The phrase “human in the lead” suggests something stronger than a final review step. It means people define the purpose of the tool, test it, monitor it, evaluate its output, and decide when it needs to change. AI can assist, accelerate, and inform, but it should not become the owner of the process.

That standard matters for trust inside an organization as much as it matters for accuracy. Employees need to know that AI is there to help them do better work, not to replace thought, responsibility, or care. Chambers makes clear that, in his environment, AI is a tool for efficiency, not a substitute for the people doing the work.

That framing is especially important in creative and knowledge-heavy fields, where quality, judgment, and context still depend heavily on human expertise. AI may help teams move faster, explore more options, or process more information. But the final answer still needs human ownership.

The next phase of AI will require patience

Many organizations still underestimate how much discipline this transition requires — both in terms of the time it will take to get real returns from AI and the amount of change management involved in that process. Too much of the current conversation still treats AI adoption like a switch that can be flipped. In reality, it looks more like a long process of testing, learning, reviewing, and adjusting.

The smartest AI leaders are asking:

- What should be governed before AI scales?

- Which use cases are real and which are just interesting demos?

- How should sensitive content be protected when AI systems can surface information so quickly?

AI at work will be shaped not just by better and better models but by whether organizations can build trust around how those models are used. That’s the real work of becoming AI-first: not just moving fast, but building the kind of foundation that lets you move with confidence.