How to scale the expertise of the best people across the organization is an age-old problem. Adobe is solving it not just by securely applying AI to its corpus of corporate knowledge but by training AI to think like its top experts and decision-makers.

Every organization has people who know things that nobody else knows: The lawyer who’s seen every variation of a contract dispute. The engineer who remembers why a particular system was built a certain way. The salesperson who understands exactly what makes a specific customer tick. But how do you make what your best people know available to everyone else? Documentation and training programs help. But the experts remain bottlenecks, and their knowledge often walks out the door when they leave.

E.A. Rockett, Adobe's Vice President of Legal Technology & Product Innovation, has spent years thinking about how technology can amplify (rather than replace) human expertise. In a recent Box AI First Podcast conversation with Box Chief Customer Officer Jon Herstein, Rockett shared how Adobe is approaching the challenge of retaining and using all that expert knowledge, along with his belief that investing in technology attracts stronger talent in the first place.

Key takeaways:

- AI adoption is a talent strategy: Access to cutting-edge tools attracts and retains top performers, which is reason enough on its own to invest in technology

- Governance should enable, not block: Adobe’s structured “A through F” framework guides AI initiatives toward safe implementation

- AI extends expertise; it doesn’t replace it: By capturing both how top experts think and what they avoid doing, organizations can scale specialized knowledge across the entire workforce

AI adoption is fundamentally a talent strategy

Whether an organization facilitates or restricts access to modern tools, it signals something about its values, and talented people notice. As Herstein quips, "Show me your technology stack and I'll show you your culture."


While many organizations focus their AI efforts on productivity and cost reduction, Rockett believes AI adoption’s biggest impact could be in attracting and retaining top performers. "AI players want to be at a place where they get to play with cutting edge technology," Rockett says. "It’s part of our culture to let people play with these shiny objects and see what they can do with them."

But the flip side is equally important: "Whatever cost optimizations, efficiency, and productivity you thought you could scrape up from technology is nothing compared to the value of an A player walking out the door." So as an early and abiding technology leader, Adobe is committed to fostering an AI-forward culture where experts are not just attracted to the company but incentivized to stay.

Adobe’s framework for prioritizing AI projects

Rockett's enthusiasm for this moment in technology is palpable. "We're at a special moment in time," he explains. "The longer the trajectory is to get from idea to what we want, it kind of starts to get diluted along the way. The quicker you can solve it — get that first iteration, and then fail forward the way that we often talk about — that’s key."

What excites Rockett most isn't efficiency gains or cost savings, though those matter. It's the ability to move from concept to working solution faster than ever before. "I'm excited because it's the first time we're seeing that velocity of iteration we always wanted," Rockett says, "and that velocity of iteration is also allowing personalization at scale."

When Adobe decided to enable AI across its 30,000-person workforce, the company faced the same question every large organization does at this dramatic moment: How do you move fast with AI without creating unacceptable risks?

Adobe’s solution was to create a structured framework it calls "A through F." Each letter represents a key consideration:

- Which team is involved

- What technology they're using

- What data goes in

- What output they expect

- Who the audience is

- What the overall objective is

Taking the answers to all of these questions into consideration, decision-makers can then decide which AI initiatives to pursue. There’s no hard-and-fast formula to follow; decisions rest on experience and expertise. Rockett explains, "It's not about good use cases, bad use cases, risky use cases, not risky use cases. You're taking those six elements and dialing it in."

Implementation decisions can then be made according to the specific AI use case, as Rockett describes: "For this use case, don't use this technology; use this other technology. For your input data, can you use non-confidential information?"
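For readers who think in code, here is a minimal Python sketch of how an intake record built on those six considerations might look. It is an illustration only, not Adobe's actual tooling: the class name `AIInitiative`, the `confidential_input` flag, the helper `suggest_adjustments`, and the tool names are all hypothetical. The point it tries to show is that the review returns guidance notes, in the spirit of Rockett's examples, and never an approve-or-deny verdict.

```python
from dataclasses import dataclass

# Hypothetical tool names, used only for illustration.
TOOLS_CLEARED_FOR_CONFIDENTIAL_DATA = {"internal-assistant"}


@dataclass(frozen=True)
class AIInitiative:
    """One proposed AI use case, described by the six considerations."""
    team: str
    technology: str
    input_data: str
    expected_output: str
    audience: str
    objective: str
    confidential_input: bool = False  # assumed flag, not part of the source framework


def suggest_adjustments(initiative: AIInitiative) -> list[str]:
    """Return guidance notes for the requesting team (no approve/deny result)."""
    notes: list[str] = []
    if initiative.confidential_input:
        notes.append("For your input data, can you use non-confidential information?")
        if initiative.technology not in TOOLS_CLEARED_FOR_CONFIDENTIAL_DATA:
            notes.append(
                f"For this use case, consider a different technology than "
                f"{initiative.technology!r}."
            )
    return notes


proposal = AIInitiative(
    team="Legal",
    technology="public-chatbot",
    input_data="signed customer contracts",
    expected_output="clause summaries",
    audience="in-house attorneys",
    objective="faster contract review",
    confidential_input=True,
)
for note in suggest_adjustments(proposal):
    print(note)
```

In practice, as Rockett stresses, the "dialing in" relies on experienced judgment; fixed rules like the two above could only ever cover the simplest cases.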

The goal isn't to block initiatives but to guide them toward safe implementation. This approach positions data governance as an enabler rather than a gatekeeper. As Herstein notes, "What you're trying to do is understand what the business is trying to accomplish and then help guide them and establish guardrails as opposed to saying, 'You can't do that.'"

Extending the reach of expertise without losing the experts

Different people need different things. A lawyer working on antitrust issues has different needs than someone in sales. Previously, building custom solutions for each group required significant investment. But with recent technology innovations, AI customization has become practical.

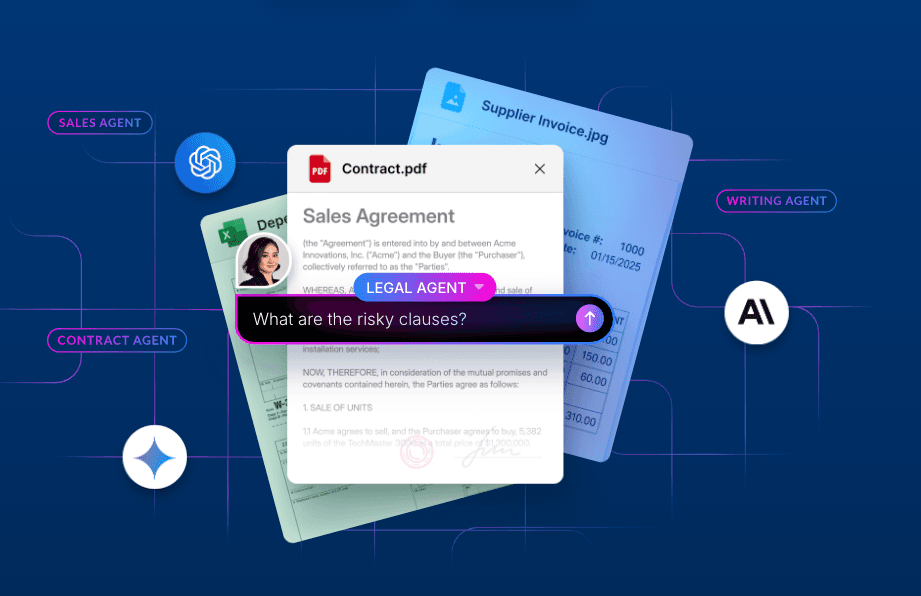

When designing any AI pilot, Adobe follows a five-layer approach that merges technological capability with expert knowledge. It answers a question Rockett poses: “How does human plus machine translate in this AI atmosphere, in this AI world, and in enabling great AI solutions?”

The five layers Rockett uses to design an AI pilot:

- The first is the foundational AI model itself, which might be a particular LLM or a diffusion model

- The second is subject-matter-specific knowledge: all the proprietary institutional knowledge you can’t access unless you’re able to apply AI to your internal corpus of content

- The third layer captures how an expert would approach a problem

- And on the flip side of this, the fourth layer documents what an expert would never do — the mistakes they've learned to avoid

- Finally, the fifth layer uses AI to generate effective prompts based on all the previous layers

"Creating this foundation gives you a solid structure," Rockett explained. "Now, somebody who may not be an expert can start doing runtime stuff, asking AI questions as if the expert were in the room."

Combining layers of AI capability with human expertise doesn't replace experts; it extends their reach. The antitrust lawyer's knowledge becomes available to colleagues who need quick guidance. The finance expert's judgment can inform decisions across the organization.
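To make the five layers described above more concrete, here is a rough Python sketch of how they might be captured and combined. Everything in it is an assumption for illustration: the names `PilotLayers` and `build_prompt`, the model name, and the sample content are hypothetical, and the fifth layer is replaced by a fixed text template, whereas Rockett describes using AI itself to generate the prompts.

```python
from dataclasses import dataclass, field


@dataclass
class PilotLayers:
    model: str                                                 # layer 1: foundational model
    knowledge: list[str] = field(default_factory=list)         # layer 2: internal subject-matter content
    expert_approach: list[str] = field(default_factory=list)   # layer 3: how an expert approaches the problem
    expert_avoids: list[str] = field(default_factory=list)     # layer 4: what an expert would never do


def build_prompt(layers: PilotLayers, question: str) -> str:
    """Stand-in for layer 5: a fixed template rather than an AI-generated prompt."""
    sections = [
        "Reference material:\n" + "\n".join(f"- {k}" for k in layers.knowledge),
        "How the expert approaches this:\n"
        + "\n".join(f"{i}. {s}" for i, s in enumerate(layers.expert_approach, 1)),
        "What the expert never does:\n" + "\n".join(f"- {a}" for a in layers.expert_avoids),
        "Question: " + question,
    ]
    return "\n\n".join(sections)


# Hypothetical sample content for an antitrust helper.
antitrust = PilotLayers(
    model="example-llm",
    knowledge=["Internal antitrust playbook (excerpt)"],
    expert_approach=["Identify the relevant market", "Check for competitor contact"],
    expert_avoids=["Giving a final legal conclusion without attorney review"],
)
print(build_prompt(antitrust, "Can we share pricing data with a reseller?"))
```

The resulting text would be sent to whichever model layer one names, so a non-expert's question arrives already framed by the expert's approach and cautions.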

Just get started

For organizations still figuring out their approach to AI, Rockett offers the same practical advice we hear from every AI-forward leader today: Get your hands dirty. "This is not something that someone on my team can brief me on," he says. "I need to roll up my sleeves. I need to be able to have those intelligent conversations."

The organizations that will thrive with AI are the ones that can figure out how to make their best people even better — and to make that expertise available to everyone.

For more insight, read AI-First Transformation: Box's Principles, Strategy, and Execution Framework, the first in a series about Box’s AI methodology — the frameworks for AI principles, governance structures, and value realization that enabled Box to move from experimentation to strategic execution.
