It’s November 2026. Your procurement AI agent is negotiating purchase terms directly with a supplier's sales AI agent. At this point, no humans are choreographing the interaction. There are no pre-programmed API endpoints, just two AI systems working out pricing, delivery schedules, and contract terms in real time. As the viral social platform Moltbook recently proved, this scenario isn’t science fiction. The technology already exists.

But between today's AI capabilities and tomorrow's business adoption of cross-organisational agent transactions lies a trap: How do we build the agentic future without creating catastrophic security and governance failures? Moltbook inadvertently answered this question by showing us exactly what not to do.

The Moltbook warning

Moltbook, which restricts posting to AI agents while humans lurk like spectators, briefly demonstrated something valuable: AI agents can figure out what their peers can do, agree on protocols without pre-programming, and adapt when things don't go according to script. These are the coordination patterns enterprises will eventually need for building structured data extraction. Mere chatting between agents will not suffice for real business needs.

However, what Moltbook also delivered was a security nightmare:

- 2.6% of posts contained prompt injection attacks

- 1.5M API keys and 35,000 email addresses were exposed

- Users suffered real data breaches

Top AI researchers, including Gary Marcus and Andrej Karpathy, warned people to stay away, calling it a "disaster waiting to happen."

The lesson here is what happens when “moving fast and breaking things” takes priority over security architecture. Moltbook's creator (and many users) treated agent deployment as entertainment, shipping AI systems with broad internet access and zero governance frameworks. When coordination patterns met reckless execution, the result was predictable.

“Just for kicks” versus what enterprises actually require

The Moltbook meltdown shows us exactly what enterprise agent interoperability cannot compromise on:

- Verified identity and authority: Enterprise systems need unique identity verification and explicit delegation of authority that extends existing organizational permission systems to AI agents — i.e., treat onboarding an agent like you would a person.

- Comprehensive auditability: When an agent makes a commitment, processes sensitive data, or causes an error, enterprises need forensic trails. Enterprise agent platforms must log every decision, every data access, every cross-organizational transaction in tamper-evident audit systems.

- Deterministic security boundaries: Moltbook gave agents blank-check access to the internet, enabling prompt injection attacks. Enterprise agents need security architectures that treat them as highly privileged users with explicitly scoped permissions, not experimental toys.

- Clear liability frameworks: When agents transact outside internal boundaries, enterprises need answers to three questions: Who’s responsible when something goes wrong? What are the terms of engagement? What recourse exists? Consumer platforms like Moltbook had no answers because these questions were never considered.

These aren't bureaucratic nice-to-haves for risk-averse enterprises. They're baseline requirements for any system handling real transactions, sensitive data, or regulated processes.
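To make the identity and scoped-permission requirements concrete, here is a minimal Python sketch of a deny-by-default authorization check. Everything in it is an illustrative assumption rather than any vendor's API: the `AgentGrant` record, the `is_authorized` function, the action names, and the spending ceiling are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical record of authority delegated to one agent by a named owner."""
    agent_id: str
    delegated_by: str               # the accountable person or role (assumed field)
    allowed_actions: frozenset      # explicit allow-list of action names
    max_order_value: float = 0.0    # spending ceiling in the organization's currency


def is_authorized(grant: AgentGrant, agent_id: str, action: str,
                  order_value: float = 0.0) -> bool:
    """Deny by default: allow only when identity, action, and amount all fit the grant."""
    if agent_id != grant.agent_id:
        return False
    if action not in grant.allowed_actions:
        return False
    return order_value <= grant.max_order_value


# Illustrative grant: a procurement agent may request quotes and place orders up to 50,000.
procurement_grant = AgentGrant(
    agent_id="procurement-agent-01",
    delegated_by="head-of-procurement",
    allowed_actions=frozenset({"request_quote", "place_order"}),
    max_order_value=50_000.0,
)
```

With this grant, `is_authorized(procurement_grant, "procurement-agent-01", "place_order", 20_000.0)` passes, while a 75,000 order, an unlisted action such as `"sign_contract"`, or a request from any other agent ID is refused. The design choice worth noting is that anything not explicitly granted is denied, which is the opposite of blank-check access.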

Approaching the industry-wide challenge

You don’t want to repeat Moltbook's mistakes at enterprise scale in order to be “as fast as possible.”

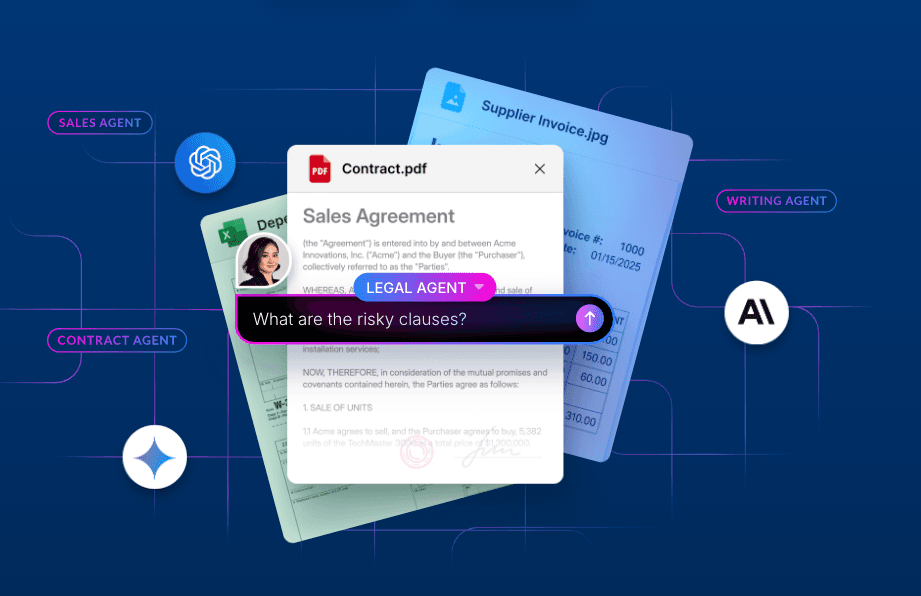

The right approach combines proven coordination patterns with identity verification, audit logging, and enterprise security boundaries. An agent can then discover what actions it's authorized to take with a partner organization's systems, negotiate the details of a transaction within those bounds, and execute, all while generating the audit trail that compliance teams require.
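The discover, negotiate, and execute sequence can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a real protocol: the `Bounds` record, the `discover` and `negotiate_and_execute` functions, and the price and delivery limits are hypothetical, and the audit trail is a plain in-memory list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bounds:
    """Hypothetical limits a partner publishes for one authorized action."""
    action: str
    max_unit_price: float
    max_delivery_days: int


def discover(published: list, action: str) -> Optional[Bounds]:
    """Return the bounds for an action, or None if the agent is not authorized for it."""
    return next((b for b in published if b.action == action), None)


def negotiate_and_execute(published: list, action: str, offers: list, audit: list):
    """Accept the first offer inside the bounds and record every step in `audit`."""
    bounds = discover(published, action)
    audit.append(("discover", action, bounds is not None))
    if bounds is None:
        return None
    for unit_price, delivery_days in offers:
        ok = (unit_price <= bounds.max_unit_price
              and delivery_days <= bounds.max_delivery_days)
        audit.append(("offer", (unit_price, delivery_days), ok))
        if ok:
            audit.append(("execute", action, (unit_price, delivery_days)))
            return unit_price, delivery_days
    audit.append(("abort", action, "no offer within bounds"))
    return None


# Illustrative run: the first offer exceeds the price ceiling, the second fits.
published = [Bounds("purchase_order", max_unit_price=12.50, max_delivery_days=14)]
audit = []
deal = negotiate_and_execute(
    published, "purchase_order", [(13.00, 10), (12.00, 12)], audit
)
```

The point of the sketch is that the audit record is a by-product of the flow itself: every discovery, rejected offer, and executed deal leaves an entry, so there is nothing to reconstruct after the fact.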

This infrastructure is designed for a future where your compliance agent verifies credentials with a partner's security agent, and your finance agent reconciles transactions with your customer's accounts payable agent. This scenario brings us to a broader industry reality: Agent interoperability will require standardized protocols that become table stakes across the ecosystem, not just proprietary vendor solutions.

A CIO perspective on AI agent interoperability

Among those developing enterprise AI agents, there’s still little deep understanding of how agents will actually interact with each other, independent of any human oversight or intervention. In the broader market, we’ve consistently seen AI stretch boundaries or even ignore them completely, so the challenge will continue to be one of managing risk: balancing innovation and competitive advantage with security and governance.

At Box, we’ve always been focused on security across the entire platform. We took a proactive approach, very early on, to the questions of agentic security, especially when it comes to interactions with external partners. We enforce security with the Agent2Agent (A2A) protocol and the Box MCP Server. This architecture ensures that every agent interaction is authenticated and logged in a tamper-evident system, replacing the blank check access of the past with governed, scoped permissions. This setup also allows admins to enable or disable specific agent access to the MCP server.
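For readers unfamiliar with the term, "tamper-evident" logging is commonly built on a hash chain, where each entry's hash covers the previous entry's hash. The Python sketch below illustrates that general technique only; it is not a description of how Box, A2A, or the Box MCP Server implements logging, and the function names and event fields are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, chaining it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing any recorded event after the fact makes `verify` return `False`, because the stored hash no longer matches the recomputed one. A chain like this only detects tampering if the latest hash is also kept somewhere the log's writer cannot modify; otherwise the whole chain could be rewritten consistently.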

While many workflows already have some level of automation in the enterprise, they still require a lot of manual energy: the “human middleware” of shepherding workflows to completion. Agentic interoperability will close those gaps and free up human labor for a higher level of work.

I invite you to explore more of our latest thinking and sign up to be the first to receive these insights on the Box Executive Insights page.